What key elements do we need to apply Large Language Models in radiology?

Introduction to Large Language Models

Large language models (LLMs), such as GPT-3 (short for “Generative Pre-trained Transformer 3”), are artificial intelligence models designed to process and produce human-like text. They are trained on massive amounts of text, including books, papers, webpages, and other sources, much of it collected from the internet.

The training process of large language models involves two main steps: pre-training and fine-tuning.

1. Pre-training

During this phase, the model is trained to predict the next word (more precisely, the next token) in a passage of text. This is usually described as unsupervised, or more precisely self-supervised, learning, because the text itself supplies the training signal and no human labels are needed. After being exposed to billions of sentences from diverse sources, the model picks up the patterns, syntax, context, and meaning in the text and learns how words and phrases relate to one another. Pre-training is carried out on large clusters of computers with specialized hardware, such as graphics processing units (GPUs) or tensor processing units (TPUs), which speeds up training by processing massive volumes of data in parallel.
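The next-word objective can be illustrated with a deliberately tiny sketch. It is not how a large language model is built: it swaps the neural network for simple word-pair counts, and the three-sentence corpus and the function names `train_bigram_model` and `predict_next` are invented for illustration. It only shows that raw text, with no human labels, is enough to learn what tends to come next.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    """Count, for each word, which words follow it in the corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        # Each adjacent pair (current word, following word) is one training example.
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Toy corpus, made up for this example.
corpus = [
    "the chest radiograph shows no acute findings",
    "the chest radiograph shows a small effusion",
    "the chest ct shows a nodule",
]
model = train_bigram_model(corpus)
print(predict_next(model, "chest"))
```

A real model replaces the count table with a transformer that conditions on the whole preceding context rather than a single word, but the training signal is the same idea.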

2. Fine-tuning

Once pre-training is complete, the model is further adjusted, or fine-tuned, for specific tasks or domains. During fine-tuning, the model is trained on a smaller dataset that has been carefully selected and labeled by human specialists, for instance a dataset of questions and answers or of customer support interactions. Fine-tuning lets the model specialize in particular tasks and makes it more useful for real-world applications.

Transformers

Large language models can handle long-range dependencies in text thanks to a deep learning architecture called the transformer. Transformers are made up of many layers, each combining a self-attention mechanism with a feed-forward neural network. When processing each word, the self-attention mechanism weighs the significance of the other words and phrases in the context, which lets the model produce coherent and contextually relevant responses.
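As a rough sketch of what “weighing the significance of words” means, the snippet below implements scaled dot-product self-attention in plain Python. It makes a simplifying assumption that real transformers do not: queries, keys, and values are the input vectors themselves, with no learned projections and only a single head. The two-dimensional token vectors are invented for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    highest = max(scores)
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this token to every token, scaled by sqrt(dimension).
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        output = [
            sum(w * value[i] for w, value in zip(weights, vectors))
            for i in range(dim)
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy token vectors; the first and third are identical.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
outputs, weights = self_attention(tokens)
print(weights[0])
```

In this toy input the first token attends more strongly to itself and to the identical third token than to the second, which is the weighting behavior the paragraph above describes.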

At inference time, the large language model takes an input prompt and generates a response using the patterns and knowledge it acquired during training. The response is frequently clear, pertinent, and human-like, but it’s vital to remember that the model lacks actual understanding or consciousness.

How are large language models used in healthcare?

Large language models have the potential to be extensively utilized in various aspects of healthcare. Here are a few examples of how they can be applied:

Clinical Documentation

Language models can help healthcare professionals create clinical documentation including progress notes, discharge summaries, and medical reports. Healthcare providers can save time by using these models to automatically evaluate patient data, extract pertinent information, and produce accurate and thorough documentation.

Medical Image Analysis

Language models can also support medical image analysis. By processing the text or clinical context that accompanies an image, the models can provide insights or assist in generating structured reports based on the image findings.

Decision Support Systems

Decision support systems can use language models to provide evidence-based recommendations for choosing a treatment plan or medication. By analyzing large quantities of medical literature, clinical guidelines, and patient data, these models can help healthcare professionals make informed decisions, reduce errors, and improve patient outcomes.

Patient Engagement and Education

Large language models can be used to create interactive conversational agents or chatbots that can promote self-care, answer patient questions, and deliver medical information. These models are able to comprehend natural language inquiries and provide precise, individualized information in response, improving patient involvement and fostering health literacy.

Research and Literature Review

Scientists and researchers can use language models to conduct literature reviews and extract pertinent data from a large number of scientific articles. These models can help in summarizing articles, finding knowledge gaps, and speeding up the research process by comprehending the context and relationships inside research papers.

Natural Language Processing for Electronic Health Records (EHRs)

Language models can be used to extract useful insights and structured data from unstructured clinical notes and EHRs. By transforming free-text data into structured representations, these models can enable advanced analytics, population health research, and predictive modeling, which in turn can improve healthcare management and patient care.
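A minimal sketch of the free-text-to-structured step might look like the following. Everything specific here is an assumption made for illustration: the `Finding` schema, the prompt wording, and above all `fake_model`, which stands in for a call to a language model and simply returns canned JSON. The part worth noting is the validation, since model output should be checked before it is stored as structured data.

```python
import json
from dataclasses import dataclass


@dataclass
class Finding:
    anatomy: str
    observation: str
    present: bool


# Hypothetical prompt; a real deployment would tune the wording and schema.
PROMPT_TEMPLATE = (
    "Extract the findings from the clinical note below as a JSON list of "
    'objects with the keys "anatomy", "observation", and "present".\n\n'
    "Note:\n{note}"
)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a language model call; returns canned JSON."""
    return json.dumps([
        {"anatomy": "pleura", "observation": "effusion", "present": False},
        {"anatomy": "right upper lobe", "observation": "6 mm nodule", "present": True},
    ])


def extract_findings(note: str, model=fake_model) -> list[Finding]:
    """Ask the model for JSON and convert it into validated Finding records."""
    raw = model(PROMPT_TEMPLATE.format(note=note))
    findings = []
    for record in json.loads(raw):
        # Validate the model output instead of trusting it blindly.
        if set(record) != {"anatomy", "observation", "present"}:
            raise ValueError(f"Unexpected keys: {sorted(record)}")
        if not isinstance(record["present"], bool):
            raise ValueError("'present' must be true or false")
        findings.append(Finding(**record))
    return findings


findings = extract_findings("No pleural effusion. 6 mm nodule in the right upper lobe.")
print(findings)
```

Swapping `fake_model` for a real model call leaves the rest unchanged, and malformed output raises an error rather than entering downstream analytics.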

It’s important to note that while large language models hold great potential, their deployment in healthcare requires careful consideration of ethical, privacy, and security concerns, as well as adherence to regulatory guidelines and data protection protocols.

How are large language models used in radiology?

Large language models have shown promising applications in radiology. Here are a few ways they are being utilized in this field:

Radiology Report Generation

Generating radiology reports is a time-consuming task for radiologists. Large language models can assist in automating this process by analyzing the radiology images and associated clinical information to generate comprehensive and structured reports. These models can extract relevant findings, provide diagnostic impressions, and offer recommendations, thereby saving time and improving efficiency.

Image Interpretation and Diagnosis Assistance

Language models can be combined with medical imaging technologies to aid in the interpretation of radiology images. By analyzing the image and accompanying text or clinical context, these models can provide insights, suggest potential diagnoses, and offer differential diagnoses. They can assist radiologists in detecting abnormalities, identifying patterns, and improving diagnostic accuracy.

Clinical Decision Support

Large language models can serve as decision support tools for radiologists. By integrating with electronic health records (EHRs) and other relevant patient data, these models can assist in treatment planning and management. They can provide evidence-based recommendations for follow-up imaging, suggest appropriate imaging protocols, and aid in selecting the most suitable interventions or procedures.

Quality Assurance and Peer Review

Language models can be used for quality assurance and peer review in radiology. They can analyze radiology reports and compare them to established guidelines or standards, identifying discrepancies or potential errors. This can help ensure the accuracy and consistency of radiology interpretations and improve the overall quality of radiology practice.

Education and Training

Large language models can play a role in radiology education and training programs. They can provide interactive and personalized learning experiences, answer questions from trainees, explain complex concepts, and offer case-based learning. This can enhance the knowledge and skills of radiology students and residents.

It’s important to note that the implementation of large language models in radiology should be done with caution and in collaboration with radiologists and other healthcare professionals. Their use should complement and augment the expertise of radiologists, rather than replace them. Additionally, considerations such as model transparency, validation against gold standards, and ethical use of patient data should be addressed when deploying these models in radiology practice.

Why do we need an interoperable medical imaging infrastructure for the effective application of large language models in radiology?

Data Integration

Medical imaging data is typically stored in various systems and formats, including Picture Archiving and Communication Systems (PACS) and Electronic Health Records (EHRs). An interoperable infrastructure enables the seamless integration and sharing of data from these disparate sources. Language models require access to comprehensive and diverse datasets to train and fine-tune their algorithms effectively. An interoperable infrastructure facilitates the aggregation of data from multiple sources, providing a more comprehensive and representative dataset for training large language models.

Data Standardization

Medical imaging data comes in different formats and standards, such as Digital Imaging and Communications in Medicine (DICOM). An interoperable infrastructure ensures the consistent interpretation and exchange of imaging data by adhering to common standards. Standardization is essential for language models to accurately interpret and analyze the imaging data, as well as associated clinical information. It enables interoperability between different imaging systems and ensures compatibility between the language models and the data sources.
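To make the standardization point concrete, here is a small sketch that maps records from two sources onto one shared schema. `PatientID`, `StudyDate`, and `Modality` are standard DICOM attribute keywords, and DICOM stores dates as `YYYYMMDD`. The EHR-side field names (`mrn`, `exam_date`, `exam_type`) and the sample values are hypothetical, chosen only to show the mismatch.

```python
from datetime import date, datetime


def parse_study_date(value: str) -> date:
    """Accept DICOM-style YYYYMMDD or ISO YYYY-MM-DD and return a date."""
    for fmt in ("%Y%m%d", "%Y-%m-%d"):
        try:
            return datetime.strptime(value, fmt).date()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized study date: {value!r}")


def normalize_study(record: dict, field_map: dict) -> dict:
    """Rename source-specific fields to shared keys and normalize their values."""
    normalized = {target: record[source] for source, target in field_map.items()}
    normalized["StudyDate"] = parse_study_date(normalized["StudyDate"])
    normalized["Modality"] = normalized["Modality"].upper()
    return normalized


# Source field name -> shared key. The EHR names are invented for this example.
PACS_MAP = {"PatientID": "PatientID", "StudyDate": "StudyDate", "Modality": "Modality"}
EHR_MAP = {"mrn": "PatientID", "exam_date": "StudyDate", "exam_type": "Modality"}

pacs_record = {"PatientID": "12345", "StudyDate": "20230514", "Modality": "CT"}
ehr_record = {"mrn": "12345", "exam_date": "2023-05-14", "exam_type": "ct"}

print(normalize_study(pacs_record, PACS_MAP))
print(normalize_study(ehr_record, EHR_MAP))
```

After normalization the two records are identical, so a downstream model or analytics step sees one consistent representation regardless of where the data came from.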

Data Access and Sharing

Interoperability allows authorized healthcare providers and researchers to access and share medical imaging data across different institutions or healthcare settings. Large language models benefit from diverse and large-scale datasets to develop robust and generalizable algorithms. By enabling data access and sharing, an interoperable infrastructure fosters collaboration, facilitates research, and supports the training and evaluation of language models on a broader range of data, leading to improved performance and applicability.

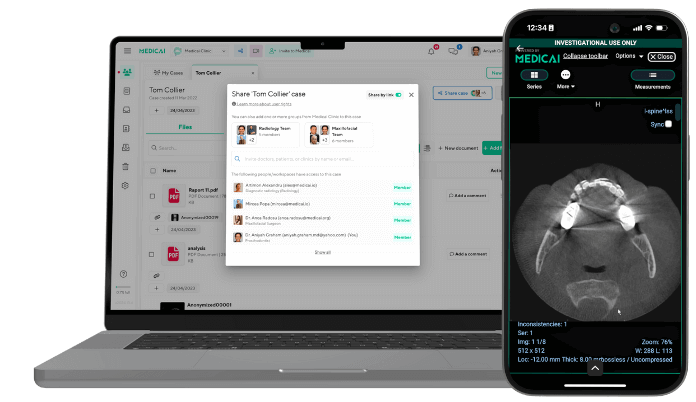

Workflow Integration

An interoperable infrastructure enables the seamless integration of language models into existing radiology workflows. Radiologists rely on efficient and streamlined processes for image interpretation, report generation, and clinical decision-making. By integrating language models into existing systems, such as PACS or reporting tools, the models can assist radiologists without disrupting their workflow. This facilitates the adoption and utilization of language models in real-world radiology practice.

Longitudinal Patient Data

Interoperability allows for the longitudinal tracking and analysis of patient imaging data over time. Language models can leverage this longitudinal data to provide more comprehensive insights and support better patient care. By accessing historical imaging studies, follow-up data, and clinical annotations, the models can assist in monitoring disease progression, treatment response, and prognosis, thereby enhancing radiologists’ decision-making capabilities.
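One simple way to picture longitudinal data is as a per-patient timeline of studies ordered by date. The sketch below assumes records have already been brought into a shared shape. The field names (`patient_id`, `study_date`, `nodule_mm`) and all values are invented for illustration, and the size comparison stands in for whatever measurement a real workflow would track.

```python
from collections import defaultdict
from datetime import date


def build_timelines(studies):
    """Group studies by patient and sort each patient's studies by date."""
    timelines = defaultdict(list)
    for study in studies:
        timelines[study["patient_id"]].append(study)
    for history in timelines.values():
        history.sort(key=lambda s: s["study_date"])
    return dict(timelines)


def size_change_mm(history):
    """Change in the measured size between the earliest and latest study."""
    return history[-1]["nodule_mm"] - history[0]["nodule_mm"]


# Made-up studies, deliberately out of chronological order.
studies = [
    {"patient_id": "A", "study_date": date(2023, 6, 1), "nodule_mm": 8},
    {"patient_id": "B", "study_date": date(2023, 2, 10), "nodule_mm": 4},
    {"patient_id": "A", "study_date": date(2022, 12, 1), "nodule_mm": 6},
    {"patient_id": "A", "study_date": date(2023, 3, 1), "nodule_mm": 7},
]

timelines = build_timelines(studies)
print(size_change_mm(timelines["A"]))
```

An ordered history like this is the kind of context that could be summarized and passed to a language model alongside the current study.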

Conclusion

An interoperable medical imaging infrastructure like Medicai’s promotes data sharing, standardization, collaboration, and workflow integration, creating an environment conducive to the effective implementation of large language models in radiology. It helps maximize the benefits of these models by leveraging comprehensive datasets and seamlessly integrating them into clinical practice, ultimately improving patient care and outcomes.
