AI in Medical Research: How to Ensure Content Accuracy

AI made its way into medical research quietly. To be honest, that is unsettling.

It did not arrive with a major announcement or an inauguration ceremony. It accumulated slowly but consistently from the moment it was introduced. It started with a few experimental models and one or two scattered datasets. Then, seemingly all of a sudden, it was rooted deep inside research labs, diagnostic workflows, and drug discovery pipelines, and it began taking over work that previously ran on hard-earned training and years of experience.

That process is how we got to where we are today: relying on systems that don’t “know” anything in the human sense, yet influence decisions that carry real consequences.

That tension matters. Because in medicine, being slightly wrong isn’t harmless. It compounds.

AI Isn’t Intelligent. It’s Pattern-Obsessed.

Call it intelligence if you want, but that’s branding.

What these systems actually do is pattern recognition at scale. Feed them clinical records, imaging data, and genomic sequences, and they start connecting dots. Sometimes faster than any human team could.

That’s where the value is. Early disease detection, accelerated drug development, predictive modeling.

But there’s a catch that doesn’t get enough attention.

AI doesn’t have the capacity, or feel the need, to question its inputs. At least, not yet.

If there’s bias in the data, the output comes out biased. If the dataset has gaps, the findings will carry the same blind spots. These systems have no internal alarm that goes off when something isn’t quite right.

A study in Nature Medicine highlights AI models performing inconsistently across broader populations because they were trained on datasets drawn from limited demographics. The failure isn’t caused by the math; it’s caused by the limitations of the data.

And everything rides on that distinction.

Where Everything Starts Going Wrong

Errors in AI-assisted or AI-driven medical research are seldom dramatic, and they are never self-explanatory. They usually slip in quietly, disguised as plausible but questionable conclusions.

Training Data Bias

Certain populations remain overrepresented in many datasets to this day: mostly urban, Western, and digitally recorded. Apply those models elsewhere and performance drops, and the drop is not subtle.
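
One way to make this kind of gap visible is to break evaluation results down by population instead of reporting a single overall score. The following is a minimal sketch using only the Python standard library; the group names, the records, and the 10-point gap threshold are illustrative assumptions, not values from any real study.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """Return {group: accuracy} for (group, y_true, y_pred) records."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, y_true, y_pred in records:
        total[group] += 1
        correct[group] += int(y_true == y_pred)
    return {g: correct[g] / total[g] for g in total}

def underperforming_groups(records, max_gap=0.10):
    """Groups whose accuracy trails the best group by more than max_gap."""
    acc = accuracy_by_group(records)
    best = max(acc.values())
    return sorted(g for g, a in acc.items() if best - a > max_gap)

# Hypothetical evaluation records: (site type, true label, predicted label)
records = [
    ("urban", 1, 1), ("urban", 0, 0), ("urban", 1, 1), ("urban", 0, 0),
    ("rural", 1, 0), ("rural", 0, 0), ("rural", 1, 1), ("rural", 0, 1),
]

print(accuracy_by_group(records))
print(underperforming_groups(records))
```

A single aggregate accuracy over these records would hide the fact that one group is served far worse than the other.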

Drift in Annotation

Medical labeling isn’t always clean. Radiologists disagree. Pathologists interpret differently. Those inconsistencies don’t disappear in training. They get encoded.
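
Disagreement between annotators can be measured before training rather than discovered afterwards. A common statistic for two annotators is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below implements it with the standard library; the two label lists are made-up examples, not real reads.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Fraction of items where both annotators chose the same label
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical reads of the same ten scans by two radiologists
B, M = "benign", "malignant"
reader_1 = [B, B, M, M, B, M, B, B, M, B]
reader_2 = [B, B, M, B, B, M, B, M, M, B]

kappa = cohens_kappa(reader_1, reader_2)
print(round(kappa, 3))
```

Here the readers agree on 8 of 10 scans, but once chance agreement is removed the kappa is noticeably lower than 0.8, which is the kind of signal worth reviewing before those labels become training data.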

Overfitting

Some models perform beautifully in controlled environments. Then reality shows up. Different equipment, different patient profiles, different noise. Accuracy dips fast.

Synthetic Feedback Loops

This one is newer, and it’s growing.

AI-generated summaries and reports are starting to circulate inside research environments. If that content gets reused as training material, you get a loop. Machine outputs reinforcing machine outputs. Reality slowly diluted.

Lack of Explainability

Black-box systems are still common. They give answers without showing their reasoning. In finance, that’s risky. In medicine, it’s unacceptable.

Why Accuracy Is Not Negotiable

This is not a sector where we can accept “close enough” or “nearly there”.

- Diagnostic errors already affect millions of patients globally each year.

- AI models are being used to prioritize treatment pathways.

- Drug discovery pipelines depend on predictive accuracy to justify massive investments.

When AI gets the context wrong, the error isn’t theoretical anymore; it’s visible, and it directly affects the outcome.

Building Systems That Don’t Drift

Accuracy doesn’t come from a single fix. It’s built layer by layer.

Start With the Data

There’s no workaround here.

- Use diverse, representative datasets

- Validate sources rigorously

- Continuously audit for inconsistencies

If the foundation is unstable, nothing built on top of it will hold.

Keep Humans Inside the Loop

Fully automated decision-making in medical research sounds efficient. It isn’t safe.

Researchers and clinicians need to:

- Review outputs

- Correct misinterpretations

- Feed refined data back into the system

AI works best when it’s supervised, not unleashed.

Make Models Explain Themselves

Interpretability tools like SHAP and LIME exist for a reason. They don’t simplify the model, but they expose its logic.
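
To illustrate the general idea of exposing what a model relies on, here is a minimal sketch of a different and simpler technique, permutation importance, written with the standard library only. It is not SHAP or LIME and does not use their APIs. The toy model, the data, and the parameter names are all hypothetical.

```python
import random

def accuracy(model, rows, labels):
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)

def permutation_importance(model, rows, labels, n_repeats=20, seed=0):
    """Mean accuracy drop when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for col in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            shuffled = [r[col] for r in rows]
            rng.shuffle(shuffled)
            # Rebuild each row with only this one column permuted
            permuted = [r[:col] + (v,) + r[col + 1:]
                        for r, v in zip(rows, shuffled)]
            drops.append(baseline - accuracy(model, permuted, labels))
        importances.append(sum(drops) / n_repeats)
    return importances

# Hypothetical toy model: uses feature 0 only and ignores feature 1
def toy_model(row):
    return int(row[0] > 0.5)

rows = [(0.1, 5.0), (0.2, 1.0), (0.3, 4.0), (0.4, 2.0),
        (0.6, 3.0), (0.7, 5.0), (0.8, 1.0), (0.9, 2.0)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

importance = permutation_importance(toy_model, rows, labels)
print(importance)
```

Shuffling a feature the model ignores leaves accuracy unchanged, while shuffling one it depends on hurts it. That difference is the kind of visibility a reviewer needs when a decision has to be justified.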

That visibility matters. Especially when decisions need to be justified.

Validate Constantly

Models degrade over time. Data shifts. Environments change.

Regular external validation and benchmarking against clinical standards aren’t optional. They’re maintenance.
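
As a rough sketch of what that maintenance can look like, the function below compares accuracy on successive validation batches against a baseline and flags any batch that falls too far below it. The batch names, the outcomes, the 0.92 baseline, and the 0.05 tolerance are illustrative assumptions.

```python
def degraded_batches(baseline_accuracy, batch_results, tolerance=0.05):
    """Return (name, accuracy) for batches more than `tolerance` below baseline.

    batch_results is a list of (name, outcomes), where outcomes is a list of
    1 (prediction confirmed correct) or 0 (prediction found incorrect).
    """
    flagged = []
    for name, outcomes in batch_results:
        if not outcomes:
            continue  # nothing to evaluate in this batch
        acc = sum(outcomes) / len(outcomes)
        if baseline_accuracy - acc > tolerance:
            flagged.append((name, round(acc, 3)))
    return flagged

# Hypothetical externally validated batches collected over time
batches = [
    ("batch_1", [1, 1, 1, 1, 1, 1, 1, 1, 1, 0]),
    ("batch_2", [1, 1, 1, 1, 1, 1, 1, 1, 0, 0]),
    ("batch_3", [1, 1, 1, 1, 1, 1, 1, 0, 0, 0]),
]

flagged = degraded_batches(0.92, batches)
print(flagged)
```

In practice the tolerance would be set against the relevant clinical standard rather than picked arbitrarily as it is here.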

The Content Problem No One Expected

No one expected writing with AI to become a critical problem in medical research this fast. Yet here we are, facing it.

People now use AI to generate literature reviews, research summaries, and sometimes even early drafts.

On the surface it looks efficient, but it carries serious underlying risks.

Because AI-generated text can sound precise while being slightly wrong. A statistic that looks legitimate but isn’t. A citation that doesn’t exist. A conclusion that leans just a bit too far.

And once that enters the research flow, it spreads.

Midway through any serious workflow, using an AI content detector becomes less of a precaution and more of a requirement. Tools like GPTZero help identify machine-generated text so researchers can review it more critically before it’s accepted, reused, or cited.

This isn’t about rejecting AI-generated content outright. It’s about filtering it before it blends into verified knowledge.

Verification Isn’t a Step. It’s a Habit.

If there’s one place you don’t cut corners, it’s here.

Cross-Check Everything

No single source should carry weight on its own. Every claim needs to be validated against peer-reviewed research or trusted databases like PubMed.

Verify Citations Manually

AI can fabricate references. It happens. Every citation needs to be checked. Not skimmed. Checked.
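
A small helper can speed up the manual check without replacing it. The sketch below builds a PubMed search link for a cited title using the NCBI E-utilities `esearch` endpoint, so a reviewer can open it and confirm the paper exists. The helper name and the example title are hypothetical, and the code only constructs the URL; it makes no network request.

```python
from urllib.parse import urlencode

EUTILS_ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"

def pubmed_title_search_url(title):
    """Build an E-utilities esearch URL that looks a title up in PubMed."""
    params = {
        "db": "pubmed",
        "term": f'"{title}"[Title]',  # restrict the match to the title field
        "retmode": "json",
    }
    return f"{EUTILS_ESEARCH}?{urlencode(params)}"

# Placeholder title for illustration only, not a real citation
url = pubmed_title_search_url("Example title of a cited paper")
print(url)
```

A search that returns no results is a prompt to dig further, not proof of fabrication, since titles get paraphrased and not every journal is indexed. A result that does come back still needs a human to confirm that the authors, year, and findings match what the text claims.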

Recalculate the Numbers

Statistics aren’t immune to misinterpretation. Recheck the basics:

- Sample sizes

- Confidence intervals

- P-values

When you feel that something is a little off, it most likely is.
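
Recalculating is often only a few lines of work. As a sketch, the code below recomputes a 95% confidence interval for a reported proportion using the normal (Wald) approximation and compares it with the interval a draft claims. The counts, the reported intervals, and the tolerance are made-up examples.

```python
from math import sqrt
from statistics import NormalDist

def proportion_ci(successes, n, confidence=0.95):
    """Normal-approximation (Wald) confidence interval for a proportion."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need 0 <= successes <= n and n > 0")
    p = successes / n
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    margin = z * sqrt(p * (1 - p) / n)
    return max(0.0, p - margin), min(1.0, p + margin)

def matches_reported(successes, n, reported_low, reported_high, tol=0.005):
    """True if the recomputed interval agrees with the reported one."""
    low, high = proportion_ci(successes, n)
    return abs(low - reported_low) <= tol and abs(high - reported_high) <= tol

# Hypothetical claim: 80 of 100 patients responded, 95% CI 0.72 to 0.88
low, high = proportion_ci(80, 100)
print(round(low, 4), round(high, 4))
print(matches_reported(80, 100, 0.72, 0.88))
print(matches_reported(80, 100, 0.78, 0.82))
```

Papers may use a different interval method, such as Wilson or exact intervals, so a mismatch is a reason to check the stated method rather than automatic proof of an error.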

Get Specialists to Review

Ordinary reviews are not enough. An experienced domain expert will catch subtle nuances that others simply overlook, so expert review is never optional.

How Structured Systems Are Adapting

Many organisations have already started restructuring their workflows to account for these risks.

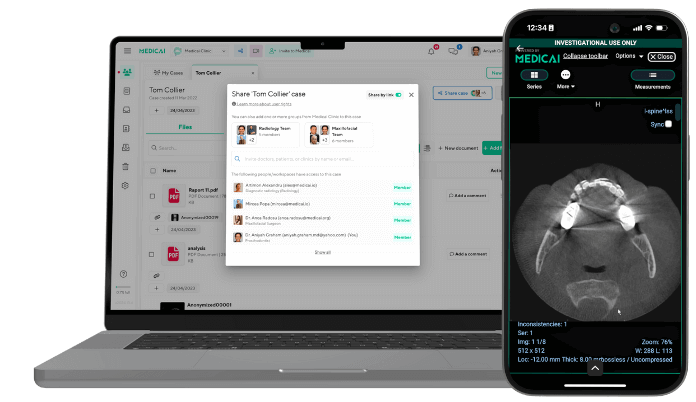

AI-assisted diagnostic platforms, especially in fields like medical imaging, have started integrating validation layers directly into their existing pipelines. When confidence drops, the output is flagged immediately, and uncertain cases are escalated for expert human review instead of being pushed forward.
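
The escalation rule itself can be very simple. Below is a minimal sketch of confidence-based routing; the 0.85 threshold, the route names, and the example findings are assumptions for illustration and do not describe any specific platform.

```python
def route_prediction(label, confidence, threshold=0.85):
    """Decide whether a model output proceeds or goes to expert review."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    route = "expert_review" if confidence < threshold else "proceed"
    return {"label": label, "confidence": confidence, "route": route}

# Hypothetical model outputs for two imaging studies
confident = route_prediction("no abnormality detected", 0.97)
uncertain = route_prediction("possible nodule", 0.61)

print(confident["route"])
print(uncertain["route"])
```

The important design choice is that low-confidence output is never silently passed along. It is labeled and sent to a person.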

Traceability has also shifted in a major way. Newer systems log where and how much AI contributes, creating an audit trail that can be reviewed later.

These aren’t theoretical upgrades. They’re responses to real gaps that have already shown up.
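
An audit trail of this kind can be as plain as one structured record per AI contribution. The sketch below uses only the standard library; the field names, identifiers, and model name are hypothetical, and a real system would follow its own schema and storage rules.

```python
import hashlib
import json
from datetime import datetime, timezone

def audit_record(document_id, section, model_name, ai_text,
                 reviewer=None, timestamp=None):
    """Describe one AI contribution: where it went and how much there was."""
    ts = timestamp or datetime.now(timezone.utc).isoformat()
    return {
        "document_id": document_id,
        "section": section,                # where the AI contributed
        "model": model_name,
        "ai_characters": len(ai_text),     # how much it contributed
        "content_sha256": hashlib.sha256(ai_text.encode("utf-8")).hexdigest(),
        "reviewed_by": reviewer,           # None until a human signs off
        "timestamp": ts,
    }

def append_record(path, record):
    """Append the record as one JSON line to an append-only log file."""
    with open(path, "a", encoding="utf-8") as fh:
        fh.write(json.dumps(record, sort_keys=True) + "\n")

# Hypothetical entry for an AI-drafted summary paragraph
record = audit_record("study-001", "summary", "example-model",
                      "Draft summary text.",
                      timestamp="2024-01-01T00:00:00+00:00")
print(json.dumps(record, indent=2))
```

Storing a hash of the text rather than the text itself lets a reviewer later confirm which passage was machine-generated without duplicating sensitive content in the log.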

Ethics Isn’t Separate From Accuracy

Ethics and accuracy are intertwined. One cannot be achieved without the other.

Bias Causes Consequences

An AI system that underperforms, even if only for specific populations, is no longer a mere technical flaw; it becomes a healthcare disparity.

Accountability Requires Clarity

When a decision is AI-influenced, responsibility and accountability must be clearly defined. A named individual or department must own the output.

Building Trust Requires Transparency

If AI was used in an analysis or a piece of research, that use must be disclosed. Using AI quietly causes a gradual loss of credibility.

Where All Of It Is Going

The use of AI in medical research is taking root. It isn’t just branching out, as was expected; it’s going deeper.

Toward personalized treatment models. Real-time diagnostics. Predictive health systems that try to anticipate issues before they surface.

That direction raises the bar.

Future systems will likely have the ability to:

- Detect built-in bias

- Validate data continuously

- Make decisions with hybrid frameworks

- Comply with regulations written specifically for AI-generated content

At this rate, we won’t be checking accuracy only at the very end. Instead, checks will be built into every stage.

Final Word

AI can only reflect what you give it. It cannot create truth on its own.

If the input is flawed, the output will be flawed too. With AI, that flawed output arrives much faster and is much harder to doubt.

For that reason, responsibility doesn’t lie with the model. The people directly involved in building, trusting, and using the model are the ones accountable for it.

So validate aggressively, doubt even the cleanest answers, and never confuse being confident with being correct.

Because in medical research, the aftermath of getting something wrong doesn’t just show up on paper; it affects thousands, even millions, of lives.
