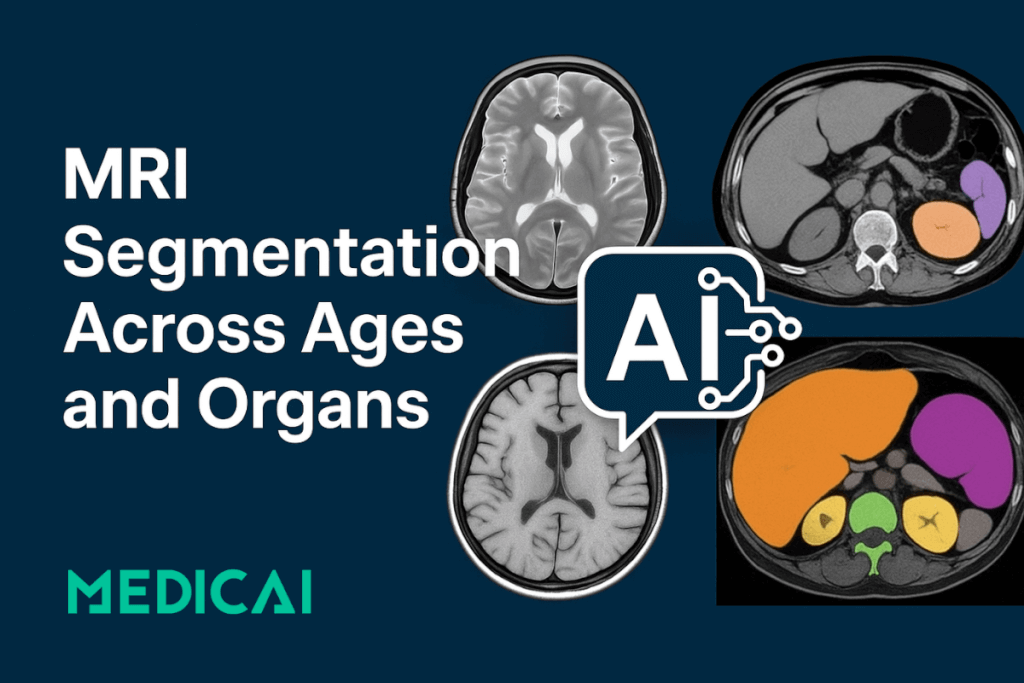

MRI Segmentation Across Ages and Organs

What if your MRI scan could do more than just show images? What if it could deliver precise measurements, highlight disease progression, and even guide treatment in minutes?

That’s the promise of modern MRI segmentation, a technology quietly transforming how radiologists and researchers interpret medical images.

MRI segmentation breaks down complex scans into clearly labeled regions, such as white matter, gray matter, and individual organs. Once a tedious manual task, segmentation is now powered by deep learning and open-source tools, enabling high-speed, high-accuracy analysis across every age group and MRI sequence.

Let’s walk through the MRI segmentation workflow, compare traditional and deep learning-based methods, and see how these innovations deliver clinical value at scale.

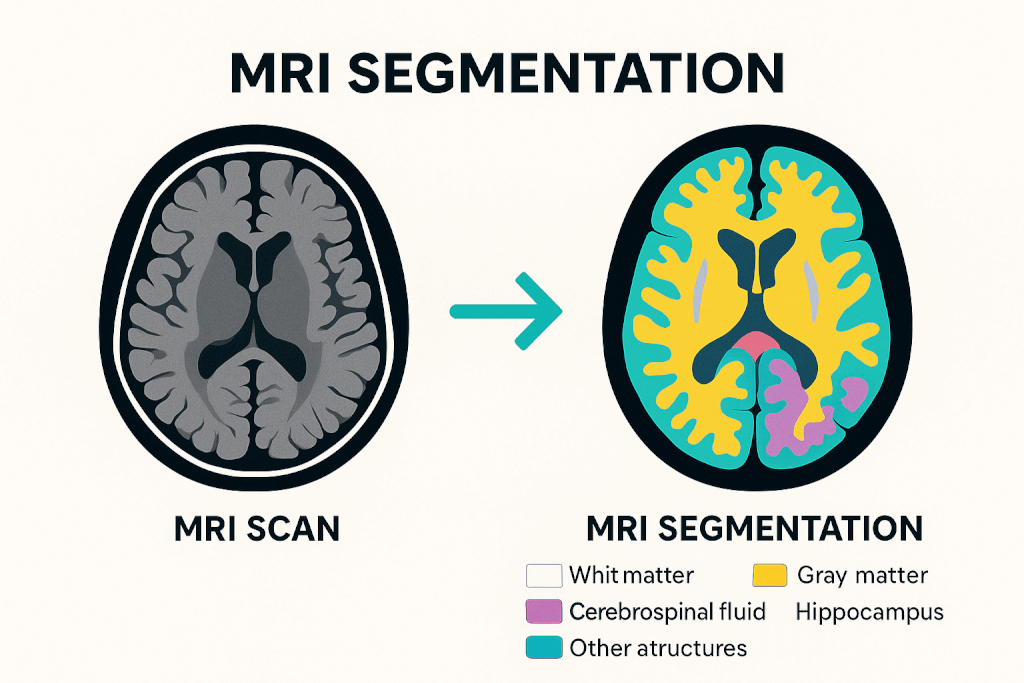

What Is MRI Segmentation, and Why Is It Essential?

MRI segmentation is about turning raw images into useful insights. It involves dividing an MRI scan into distinct regions, like white matter (WM), gray matter (GM), cerebrospinal fluid (CSF), and specific anatomical structures such as the hippocampus, thalamus, or even abdominal organs.

Each segmented area is labeled and measured, allowing clinicians and researchers to assess volume, shape, or change over time.

This process is essential in both clinical and research settings. In neurology, for example, accurate segmentation helps track brain development in children, measure lesion volume in multiple sclerosis, or quantify cortical thinning in Alzheimer’s disease.

Beyond the brain, segmentation of organs like the liver, spleen, and kidneys enables volumetric analysis for surgical planning, aging studies, or disease screening.

Modern tools also enable multi-label segmentation, mapping dozens of structures in a single pass. Deep learning models like icobrain-dl and TotalSegmentator MRI now allow this to happen in minutes, with performance rivaling expert radiologists.

In short, MRI segmentation is more than a technical process. It is a gateway to quantitative medicine, helping translate images into decisions.

The MRI Segmentation Workflow: From Raw Image to Insight

Every clean segmentation output relies on a structured pipeline that converts raw MRI imaging data into labeled structures. This workflow is crucial for modern medical image analysis, enhancing speed, accuracy, and accessibility through improved automation.

Step 1: Preprocessing

The data needs cleaning before any model can make sense of an image. This step involves:

- Bias field correction to remove smooth, unwanted intensity variations caused by magnetic field inhomogeneities. Tools like N4ITK are commonly used here.

- Skull stripping (or brain extraction) to remove non-brain tissues like skin, skull, and eyes, which may interfere with results.

- Image registration to align the scan to a standard brain atlas (like MNI space) so measurements are anatomically consistent across patients.

- Intensity normalization to make voxel values comparable between scans, especially with multi-center data.
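As a rough illustration of the last step, here is a minimal z-score normalization sketch. It is only one of several normalization schemes, and it rests on assumptions that are not from any specific tool: background voxels are coded as 0, and the scan is flattened into a plain Python list to keep the example dependency-free (a real pipeline would typically work on NumPy arrays).

```python
from statistics import mean, pstdev

def zscore_normalize(voxels, background=0.0):
    """Rescale foreground intensities to zero mean and unit variance."""
    foreground = [v for v in voxels if v > background]
    if len(foreground) < 2:
        raise ValueError("need at least two foreground voxels")
    mu = mean(foreground)
    sigma = pstdev(foreground)
    if sigma == 0:
        raise ValueError("foreground intensities are constant")
    # Background voxels are left at 0.0; only foreground is rescaled.
    return [(v - mu) / sigma if v > background else 0.0 for v in voxels]

# Two toy "scans" of the same anatomy, the second from a scanner
# with ten times the signal gain (hypothetical values).
scan_a = [0, 100, 200, 300]
scan_b = [0, 1000, 2000, 3000]
norm_a = zscore_normalize(scan_a)
norm_b = zscore_normalize(scan_b)
```

After normalization the two lists agree up to floating-point rounding, which is the property that makes multi-center data comparable.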

Step 2: Segmentation

Next, the actual segmentation takes place. Depending on the method:

- Manual segmentation involves a radiologist outlining each region by hand, which is highly accurate but time-consuming.

- Statistical methods use intensity values and clustering algorithms (k-means or EM) to label regions.

- Deep learning models like icobrain-dl and TotalSegmentator MRI perform this step automatically. They assign labels to each voxel using neural networks trained on thousands of annotated scans.

- Advanced models can now segment up to 80 structures across body regions and MRI sequences in a single scan.
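To make "assigning a label to each voxel" concrete, the sketch below shows only the final labeling step that typically follows a segmentation network: convert per-class scores to probabilities with a softmax, then pick the most likely class. The class order, the score values, and the function names are hypothetical and are not taken from icobrain-dl or TotalSegmentator MRI.

```python
import math

CLASS_NAMES = ["background", "CSF", "GM", "WM"]  # hypothetical label order

def softmax(logits):
    """Convert raw class scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def label_voxels(logits_per_voxel):
    """Return the index of the most probable class for each voxel."""
    labels = []
    for logits in logits_per_voxel:
        probs = softmax(logits)
        labels.append(max(range(len(probs)), key=probs.__getitem__))
    return labels

# Made-up network scores for three voxels, one row per voxel.
network_output = [
    [4.0, 0.1, 0.2, 0.1],
    [0.1, 0.3, 2.5, 1.0],
    [0.0, 0.2, 0.9, 3.1],
]
labels = label_voxels(network_output)
names = [CLASS_NAMES[i] for i in labels]
```

Here the three voxels are labeled background, GM, and WM respectively.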

Step 3: Post-processing and Quantification

After segmentation, the final step is turning labeled regions into quantitative insights:

- Volume calculations, used to assess brain atrophy or organ size changes

- Shape analysis for identifying structural abnormalities

- Integration with clinical platforms like Medicai’s PACS, or export for research use

In tools like icobrain-dl, this happens in under five minutes, even on standard CPU systems, making it viable for clinical workflows.
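The volume calculation itself is simple arithmetic: count the voxels carrying each label and multiply by the physical size of one voxel. The sketch below assumes a label map stored as nested lists, label 0 as background, and voxel spacing in millimeters; the label numbers and the function name are illustrative only.

```python
from collections import Counter

def label_volumes_ml(label_map, spacing_mm):
    """Return {label: volume in milliliters} for a 3D label map."""
    dz, dy, dx = spacing_mm
    voxel_mm3 = dz * dy * dx
    counts = Counter(v for plane in label_map for row in plane for v in row)
    counts.pop(0, None)  # label 0 is assumed to be background
    # 1 mL = 1000 mm^3
    return {label: n * voxel_mm3 / 1000.0 for label, n in counts.items()}

# Toy 2 x 2 x 3 label map: 1 = gray matter, 2 = white matter (hypothetical).
toy = [
    [[0, 1, 1], [2, 2, 0]],
    [[1, 1, 0], [2, 0, 0]],
]
volumes = label_volumes_ml(toy, spacing_mm=(2.0, 1.0, 1.0))
```

With 2 mm³ voxels, the four voxels of label 1 give 0.008 mL and the three voxels of label 2 give 0.006 mL. Repeating the calculation on scans from different dates is what turns a segmentation into an atrophy or growth measurement.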

Traditional Techniques: The Manual and Model-Based Foundations

MRI segmentation originally depended on manual tracing and mathematical models, which set the foundation for modern tools and are still used in some research and clinical settings.

Manual Segmentation

This is the most straightforward method, and also the most labor-intensive. A trained radiologist or technician manually outlines each region of interest (ROI), slice by slice. While considered the “gold standard” for accuracy, it’s not scalable, and results can vary between operators.

Intensity-Based Methods

These approaches use voxel intensity to classify tissues:

- Thresholding separates regions based on voxel brightness. It is simple but sensitive to noise.

- Region growing expands a segment from a seed point based on similar intensity values.

- Classification algorithms, like Bayesian models or k-nearest neighbors, assign voxels to tissue types based on statistical properties.
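The difference between the first two approaches can be sketched on a toy 2D slice. Thresholding keeps every pixel below a cutoff regardless of position, while region growing only keeps pixels connected to the seed. The image values, the cutoff of 50, the tolerance of 5, and the choice of 4-connectivity are all illustrative assumptions.

```python
from collections import deque

def region_grow(image, seed, tolerance):
    """Grow a 2D region from `seed`, adding 4-connected pixels whose
    intensity is within `tolerance` of the seed intensity."""
    rows, cols = len(image), len(image[0])
    seed_value = image[seed[0]][seed[1]]
    region = {seed}
    queue = deque([seed])
    while queue:
        r, c = queue.popleft()
        for nr, nc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1)):
            if (0 <= nr < rows and 0 <= nc < cols
                    and (nr, nc) not in region
                    and abs(image[nr][nc] - seed_value) <= tolerance):
                region.add((nr, nc))
                queue.append((nr, nc))
    return region

# Dark tissue on the left and right, separated by a bright column.
image = [
    [10, 12, 90, 11],
    [11, 13, 95, 10],
    [12, 10, 92, 12],
]
thresholded = {(r, c) for r, row in enumerate(image)
               for c, v in enumerate(row) if v < 50}
grown = region_grow(image, seed=(0, 0), tolerance=5)
```

Thresholding selects all nine dark pixels, including the right-hand column. Region growing from the top-left seed stops at the bright column and returns only the six connected pixels on the left.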

Clustering Techniques

Unsupervised methods like k-means, Fuzzy C-Means (FCM), and Expectation Maximization (EM) group voxels with similar intensities. They’re faster than manual work but struggle with intensity overlap and noise in MRI data.
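A minimal k-means sketch on scalar intensities shows the idea: alternate between assigning each voxel to its nearest cluster center and moving each center to the mean of its members. The intensity values and the three initial centers are hypothetical, and this plain-Python version is for illustration rather than production use.

```python
def kmeans_1d(intensities, centers, iterations=20):
    """Lloyd's algorithm on scalar intensities. Returns (centers, labels)."""
    centers = list(centers)
    labels = []
    for _ in range(iterations):
        # Assignment step: each voxel joins its nearest center.
        labels = [min(range(len(centers)), key=lambda k: abs(v - centers[k]))
                  for v in intensities]
        # Update step: each center moves to the mean of its members.
        new_centers = []
        for k in range(len(centers)):
            members = [v for v, lab in zip(intensities, labels) if lab == k]
            new_centers.append(sum(members) / len(members) if members else centers[k])
        if new_centers == centers:
            break  # converged: centers no longer move
        centers = new_centers
    return centers, labels

# Made-up intensities forming three tissue-like groups.
voxels = [12, 15, 14, 80, 85, 82, 150, 155, 149]
centers, labels = kmeans_1d(voxels, centers=[0, 100, 200])
```

On this clean toy data the three groups separate after a few iterations. With real MRI data, overlapping tissue intensities and noise blur the group boundaries, which is the limitation described above.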

Atlas-Based Segmentation

A probabilistic brain atlas (often built from healthy subjects) is registered to the patient’s scan. Labels from the atlas are transferred to the image. It’s useful, but less reliable in cases involving anatomical abnormalities or pediatric brains.

Surface and Contour Models

Also known as deformable models, these methods trace boundaries based on image gradients. Active contours and level sets are examples that respond to both shape and edge information.

They are great for capturing object boundaries, but slow and sensitive to initialization.

AI and Deep Learning in MRI Segmentation: Precision at Scale

In recent years, deep learning has redefined what’s possible in MRI segmentation. Unlike traditional methods, which rely on handcrafted rules or statistical assumptions, deep learning models learn patterns directly from thousands of labeled scans.

The result?

Faster, more accurate, and more generalizable segmentation, especially across patient age, anatomy, and imaging variability.

How Deep Learning Works in Segmentation

The U-Net architecture is the backbone of most modern segmentation tools. It is a convolutional neural network (CNN) that processes 3D image volumes and learns to classify each voxel from both local features and global context.

Enhancements like 3D U-Nets, multi-head outputs, and spatial attention have made these models even more powerful for brain and body segmentation.

For example, icobrain-dl, developed to support lifespan-wide MRI analysis, is a deep learning pipeline trained on patients aged 2 to 90. Using a dual-task neural network, it segments brain tissues (WM, GM, CSF) and 22 anatomical structures.

The system achieved Dice scores above 82% and was validated on diverse adult and pediatric data. It outperformed tools like ChildMetrix and FreeSurfer in speed and reproducibility while running fully on CPU in under five minutes. [Simarro et al., 2024]
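For readers unfamiliar with the metric, the Dice score measures the overlap between a predicted mask $A$ and a reference mask $B$ as $2|A \cap B| / (|A| + |B|)$, ranging from 0 (no overlap) to 1 (identical). A minimal sketch follows, assuming binary masks flattened to lists of 0/1. The masks are made up, and this is not the evaluation code from the cited study.

```python
def dice_score(predicted, reference):
    """Dice = 2|A ∩ B| / (|A| + |B|) for two binary masks (flat 0/1 sequences)."""
    if len(predicted) != len(reference):
        raise ValueError("masks must have the same number of voxels")
    overlap = sum(1 for p, r in zip(predicted, reference) if p and r)
    total = sum(1 for p in predicted if p) + sum(1 for r in reference if r)
    if total == 0:
        return 1.0  # both masks empty: treated as perfect agreement (a convention)
    return 2.0 * overlap / total

predicted = [0, 1, 1, 1, 0, 0, 1, 0]
reference = [0, 1, 1, 0, 0, 1, 1, 0]
score = dice_score(predicted, reference)
```

Each mask contains four voxels and they share three, so the score is 2 × 3 / (4 + 4) = 0.75, or 75%.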

icobrain-dl is used in real-world scenarios, from detecting Alzheimer’s disease to screening children for Cerebral Visual Impairment (CVI).

Why Deep Learning Wins

AI segmentation models offer a rare combination: clinical-grade precision and real-time usability. They:

- Handle noisy or incomplete data better

- Adapt across scanner types and institutions

- Deliver consistent, reproducible results without human bias

- Scale for large datasets or time-sensitive environments

And with tools like icobrain-dl, this power is becoming increasingly accessible to radiology departments, research labs, and even cloud-based health platforms.

Clinical Use Cases and Diagnostic Value

MRI segmentation delivers real clinical impact by transforming images into measurable data that supports diagnosis, treatment, and research. Today’s AI models for MRI are driving this shift across specialties:

- Alzheimer’s disease: Tools like icobrain-dl quantify cortical atrophy, enabling early diagnosis and monitoring of disease progression over time.

- Cerebral Visual Impairment (CVI) in children: Deep learning models reveal white matter loss patterns that often go undetected in manual reviews, improving early intervention strategies.

- Whole-body organ segmentation: TotalSegmentator MRI segments organs like the liver, spleen, and kidneys for use in volumetry, aging studies, and opportunistic screening.

- Research and clinical scalability: These models are already used in both real-world clinical workflows and large-scale datasets, bridging the gap between bench and bedside.

Conclusion

MRI segmentation has advanced from manual outlines to AI models that deliver fast, accurate, and scalable insights. Tools like icobrain-dl and TotalSegmentator MRI make it easier to analyze complex anatomy across all ages and imaging protocols.

As these technologies continue to evolve, they improve workflow and reshape clinical care. The future of imaging is here, and it’s smarter, faster, and more accessible than ever.
