Structured Radiology Reporting: Templates, Best Practices, and AI-Assisted Workflows

Radiology reporting is the last clinical step in every imaging workflow — and in most practices, it is the least systematized. Radiologists dictate in natural language that varies by individual, modality, case complexity, and fatigue level. Referring clinicians receive reports that may or may not include the clinical indication, may or may not quantify the key finding, and may or may not flag urgency in a predictable location. Billing teams code from report text that was never written to support accurate charge capture. And quality audits, when they happen at all, rely on manual review of free-text documents that resist systematic comparison.

Structured radiology reporting addresses all of these problems through a single architectural change: replacing free-text dictation with template-driven workflows in which the report’s sections, mandatory fields, and measurement requirements are defined before the radiologist opens the study. The result is not a more bureaucratic report; it is a more useful one. The ACR published guidelines in 2014 recommending structured reporting as the standard for diagnostic radiology, citing evidence that structured reports improve clinician satisfaction with report clarity, reduce telephone calls from referring physicians seeking clarification, and enable the extraction of quantitative data for quality improvement programs. More than a decade later, adoption remains uneven. Practices that have implemented structured reporting consistently, with proper template design and PACS integration, achieve shorter turnaround times and measurable improvements in report completeness. Practices that have implemented it poorly, with generic templates that do not match their study mix or with reporting platforms that operate separately from the PACS viewer, often revert to free-text dictation within months.

This guide covers the complete implementation picture: how to design effective templates, what each mandatory report section must contain and why, how AI-assisted dictation and NLP change the workflow without replacing radiologist judgment, and how PACS integration determines whether structured reporting is adopted or abandoned. For practices evaluating dedicated structured reporting software that integrates with cloud PACS, Medicai’s structured reporting for radiology module is covered in the evaluation section.

What Structured Radiology Reporting Is — and What It Changes

Structured radiology reporting is a methodology for producing radiology reports in which the report format — section headings, mandatory data fields, measurement requirements, and finding classifications — is defined by a template before the radiologist begins interpreting the study. Rather than starting from a blank dictation field and composing an entire report from memory, the radiologist works through a structured template that prompts for each required element: clinical indication, technique, comparison studies, findings by anatomical region, quantitative measurements, impression, and follow-up recommendation. The output is a report with a consistent structure, consistent terminology, and consistent placement of key information that downstream users — referring clinicians, billing teams, quality auditors, and AI systems — can rely on finding in the same location every time.

What changes for radiologists?

Structured reporting shifts the cognitive demand of report generation. In free-text dictation, the radiologist simultaneously manages what to say and how to format it — deciding in real time which findings to include, in what order, with what level of detail. In structured reporting, the template handles the formatting decisions. The radiologist’s attention concentrates on clinical interpretation: what this finding means, how it compares to prior studies, what classification it warrants, and what follow-up it requires. For high-volume reporting environments — teleradiology operations, high-throughput outpatient imaging centers — this shift reduces the cognitive load that accumulates during long reading sessions and is associated with late-session reporting errors.

What changes for referring clinicians?

The referring physician receiving a structured report finds the impression in the same location regardless of which radiologist produced it, can immediately identify whether urgent action is required from a clearly labeled urgency classification, and can extract quantitative findings — tumor longest diameter, lymph node short-axis measurement, ejection fraction — from a standardized measurement field rather than parsing them from a dictated sentence. A 2014 study published in the Journal of the American College of Radiology found that structured reports reduced referring clinician misinterpretation of radiology findings by 28% compared to free-text reports, and reduced telephone calls requesting report clarification by 19%. The mechanism is simple: when the same information is always in the same place, it is found reliably rather than searched for.

What changes for operations?

Structured reporting enables operational capabilities that free-text reporting cannot support without natural language processing. Automated charge capture: when the report template maps to CPT codes by body part, modality, and complexity level, the system proposes the correct billing code at sign-off rather than requiring a coder to infer it from free text. Quality metrics: turnaround time by template type, mandatory field completion rate, critical result notification compliance, and finding classification distribution can all be extracted from structured report data without manual abstraction. Population health analytics: lesion size distributions, follow-up recommendation rates, and incidental finding prevalence can be measured across the imaging population, enabling risk stratification and care gap identification that is impossible with free-text reports.
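As a minimal sketch of template-driven charge capture, assume each signed template carries its modality, body part, and contrast phase, and the platform looks up a proposed code from a table. The table and the function name `propose_cpt` are hypothetical; the three codes shown are the common CT chest codes, but any production mapping must be verified against the current CPT code set and payer rules.

```python
from typing import Optional

# Illustrative only: verify every code against the current CPT code set and
# payer rules before using a table like this in production.
CPT_MAP = {
    ("CT", "CHEST", "without"): "71250",
    ("CT", "CHEST", "with"): "71260",
    ("CT", "CHEST", "without_and_with"): "71270",
}


def propose_cpt(modality: str, body_part: str, contrast: str) -> Optional[str]:
    """Propose a CPT code for a signed template, or None if no mapping exists.

    Returning None routes the study to a human coder instead of guessing a code.
    """
    key = (modality.upper(), body_part.upper(), contrast.lower())
    return CPT_MAP.get(key)
```

Returning `None` for unmapped combinations keeps those studies in the manual coding queue, which is safer than a best-guess code.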

Structured Reporting: A Key to Reducing Burnout and Enhancing Patient Care

Radiologists in North America are grappling with an ever-increasing workload. Their roles have expanded beyond interpreting complex imaging studies to include administrative tasks.

This multifaceted demand, compounded by staffing shortages and a growing volume of advanced imaging studies, contributes to a burnout rate of around 49% in the profession.

Burnout, characterized by emotional exhaustion and reduced efficiency, impacts radiologists’ well-being and can compromise the quality of patient care.

Impacts of Structured Reporting on Radiologists

Structured reporting enhances efficiency and reduces radiologists’ cognitive load by standardizing how diagnostic information is documented and shared.

Unlike traditional free-text reports, structured reports use pre-defined templates that ensure consistency, accuracy, and clarity.

For radiologists, this means faster report generation, fewer ambiguities, and reduced time spent on repetitive tasks.

Studies have shown that structured reporting can significantly improve turnaround times for imaging studies, allowing radiologists to focus more on critical diagnostic tasks and patient interactions.

Enhancing Patient Care

Structured reporting also benefits healthcare facilities by improving communication among care teams. Clear, standardized reports reduce the risk of misinterpretation, facilitating timely and accurate treatment decisions. In turn, this enhances patient outcomes and boosts the reputation of healthcare facilities.

Adopting structured reporting aligns with broader operational efficiency and quality improvement goals for healthcare executives. With streamlined workflows and better resource utilization, it becomes possible to address staff burnout while ensuring high-quality care delivery.

The Anatomy of a Structured Radiology Report

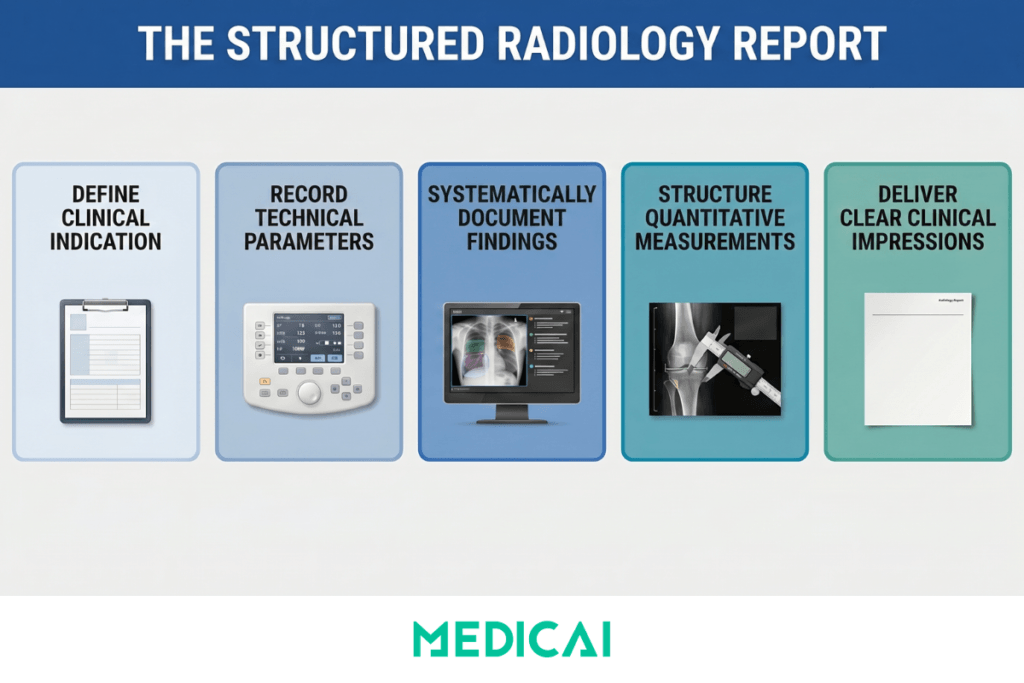

A well-structured radiology report has six mandatory sections. Each section has a specific function in the clinical and operational workflow, and omitting any of them results in an incomplete report for at least one downstream use case.

Clinical indication

The reason the study was ordered, reproduced from the imaging order and verified by the radiologist before interpretation begins. The indication must include the specific clinical question being asked: not just a diagnosis code, but the concern the ordering physician needs the imaging to address. “Evaluate for pulmonary embolism in a patient with acute-onset dyspnoea, pleuritic chest pain, and elevated D-dimer” is a clinical indication that frames the interpretation task. “Chest pain” or “shortness of breath” is a symptom label that provides no interpretive context. Templates should extract the indication automatically from the RIS order so that the radiologist verifies it rather than retyping it, eliminating a manual re-entry step that introduces transcription errors and delays the reporting workflow.

Technique

The acquisition parameters — modality, MRI field strength, CT tube voltage and current, contrast agent and dose if administered, scan range, patient positioning, and any protocol deviations from the department standard. Technique documentation serves three functions: it establishes medico-legal defensibility by recording what the radiologist was actually asked to interpret, it enables meaningful comparison with prior studies acquired under different protocols, and it provides the technical context that referring clinicians and specialists need to evaluate whether the study was adequate for the clinical question being asked. Templates should include technique as a semi-structured field with modality-specific subfields, with the option to auto-populate from DICOM header metadata when acquisition parameters are reliably captured.
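The auto-population step can be sketched as a mapping from standard DICOM attribute keywords to technique subfields. This assumes the header is available as a mapping with a `get` method; a plain dict is used here, and a pydicom `Dataset` is assumed (not guaranteed) to work the same way since it also accepts keywords in `get`. The subfield names and `technique_from_header` are hypothetical.

```python
from collections.abc import Mapping
from typing import Any

# Standard DICOM attribute keywords mapped to hypothetical technique subfields.
TECHNIQUE_TAGS = {
    "Modality": "modality",
    "BodyPartExamined": "body_part",
    "KVP": "tube_voltage_kv",
    "XRayTubeCurrent": "tube_current_ma",
    "MagneticFieldStrength": "field_strength_t",
    "ContrastBolusAgent": "contrast_agent",
}


def technique_from_header(header: Mapping[str, Any]) -> dict:
    """Pre-fill technique subfields from DICOM header values.

    Missing or empty attributes are skipped, so the radiologist completes those
    subfields manually instead of inheriting a blank value.
    """
    technique = {}
    for keyword, subfield in TECHNIQUE_TAGS.items():
        value = header.get(keyword)
        if value not in (None, ""):
            technique[subfield] = value
    return technique
```

Skipping empty attributes matters because header fields such as the contrast agent are not always reliably captured; an absent value should prompt the radiologist rather than silently pass through.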

Comparison studies

Prior imaging reviewed alongside the current study, recorded with study date, modality, and facility. Documenting comparisons is mandatory for two reasons. Clinically, it establishes that the radiologist has reviewed prior studies before issuing the current report, which is a requirement in most jurisdictions. Legally, the absence of a prior that was available and not reviewed is a significant medico-legal exposure in cases where a finding that was present on the prior is missed or understaged on the current study. Templates should make the comparison field mandatory and pre-populate it with imaging history from the PACS archive, so the radiologist can select from available priors rather than typing them from memory.
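Pre-populating the comparison field amounts to filtering and ordering the archive. The sketch below assumes a simplified `Study` record and a ranking rule chosen for illustration (same body part, acquired earlier, same-modality priors first, newest first); both `candidate_priors` and `comparison_line` are hypothetical names, and a real PACS query would supply the archive list.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Study:
    study_date: date
    modality: str
    body_part: str
    facility: str


def candidate_priors(current: Study, archive: list[Study]) -> list[Study]:
    """Return earlier studies of the same body part for the radiologist to pick from.

    Same-modality priors come first; within each group, the newest study is first.
    """
    priors = [
        s for s in archive
        if s.body_part == current.body_part and s.study_date < current.study_date
    ]
    # False sorts before True, so same-modality studies lead; negated ordinal puts newest first.
    priors.sort(key=lambda s: (s.modality != current.modality, -s.study_date.toordinal()))
    return priors


def comparison_line(prior: Study) -> str:
    """Format a selected prior as a comparison entry: modality, date, facility."""
    return f"{prior.modality} {prior.body_part.lower()}, {prior.study_date.isoformat()}, {prior.facility}"
```

The radiologist still chooses which priors were actually reviewed; the ranking only puts the most likely comparisons at the top of the list.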

Findings

The structured core of the report — systematic coverage of relevant anatomical regions with explicit documentation of both normal and abnormal findings. The findings section is where structured reporting delivers the greatest clinical quality improvement. Rather than a narrative paragraph mixing observations in the order the radiologist noticed them, a structured findings section covers each anatomical region according to a defined template: in a chest CT report, lung parenchyma, pleura, mediastinum, heart and pericardium, thoracic aorta, oesophagus, chest wall, visualized upper abdomen, and bones. Each region receives an explicit finding — either a documented normal observation or a characterized abnormal finding with relevant measurements and classification. The systematic coverage ensures that normal findings are explicitly recorded as normal rather than omitted — the omission of a region in a free-text report is ambiguous: did the radiologist find it normal, or did they not look at it?
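The distinction between “documented normal” and “not documented” can be enforced mechanically. A minimal sketch, assuming findings are stored per region as text and using the chest CT regions listed above; `missing_regions` and `render_findings` are hypothetical helper names.

```python
CHEST_CT_REGIONS = (
    "lung parenchyma", "pleura", "mediastinum", "heart and pericardium",
    "thoracic aorta", "oesophagus", "chest wall", "visualized upper abdomen", "bones",
)


def missing_regions(findings: dict[str, str], regions: tuple[str, ...] = CHEST_CT_REGIONS) -> list[str]:
    """Return regions with no explicit entry.

    An explicit normal statement counts as documented; an absent or blank entry
    does not, because it is ambiguous whether the region was reviewed.
    """
    return [r for r in regions if not findings.get(r, "").strip()]


def render_findings(findings: dict[str, str], regions: tuple[str, ...] = CHEST_CT_REGIONS) -> str:
    """Render findings in fixed template order, refusing incomplete coverage."""
    gaps = missing_regions(findings, regions)
    if gaps:
        raise ValueError(f"Undocumented regions: {', '.join(gaps)}")
    return "\n".join(f"{r.capitalize()}: {findings[r]}" for r in regions)
```

Rendering in the template’s region order, rather than the order in which findings were dictated, is what gives every report the same layout.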

Quantitative measurements

Any measured value that is clinically meaningful for the study type must appear in a structured numeric field — not embedded in dictated text. Lesion longest diameter and perpendicular short axis, lymph node short-axis diameter, aortic root and ascending aorta dimensions, left ventricular ejection fraction, or any measurement that will be compared with prior or future studies must be structured data rather than text. The reason is not primarily workflow — a measurement takes the same time to enter whether it goes into a structured field or is dictated into text. The reason is downstream use: structured measurements are extractable for trending, comparable across serial studies without manual abstraction, and automatically flaggable when they cross clinical decision thresholds. A structured measurement field that records a 2.3 cm lung nodule can trigger an automatic follow-up recommendation flag and an alert to the ordering clinician. The same measurement buried in dictated text cannot.
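Once a measurement is a number in a field, the threshold check is a comparison rather than a text-mining problem. The sketch below stores sizes in millimetres and uses a hypothetical practice-defined threshold; it does not implement any specific guideline, and real thresholds would come from the governing classification system.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Measurement:
    finding: str
    value_mm: float


# Hypothetical practice-defined threshold; real values come from the governing guideline.
NODULE_FOLLOW_UP_THRESHOLD_MM = 6.0


def follow_up_flag(m: Measurement, threshold_mm: float = NODULE_FOLLOW_UP_THRESHOLD_MM) -> Optional[str]:
    """Return an alert message if a structured nodule measurement meets the threshold."""
    if m.finding == "lung nodule" and m.value_mm >= threshold_mm:
        return (f"Follow-up flag: {m.finding} {m.value_mm / 10:.1f} cm "
                f"(threshold {threshold_mm / 10:.1f} cm)")
    return None
```

For the 2.3 cm nodule in the example above, `follow_up_flag(Measurement("lung nodule", 23.0))` returns a flag that can be routed to the ordering clinician.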

Impression

The radiologist’s clinical conclusion is the most important section of the report for the referring clinician. The ACR recommends that the impression be numbered when there are multiple findings, ordered by clinical significance with the most important finding first, written in plain language that does not require radiology training to understand, and include a follow-up recommendation with a specific timeframe when clinically indicated. Templates should require a minimum of one impression item before sign-off, provide an urgency classification field (emergent, urgent, routine, no follow-up required) that can drive alert routing, and include a standardised follow-up recommendation vocabulary where clinically relevant — “recommend CT chest in 3 months for nodule surveillance” rather than “clinical correlation recommended,” which is not an actionable recommendation.
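The impression rules above can be checked at sign-off. This sketch assumes the four urgency levels named in the text, an assumed rule that a follow-up recommendation is required unless the urgency is “no follow-up required,” and a hypothetical list of rejected non-actionable phrases; ordering items by clinical significance remains the radiologist’s decision, so `numbered` only preserves the order given.

```python
from enum import Enum


class Urgency(Enum):
    EMERGENT = "emergent"
    URGENT = "urgent"
    ROUTINE = "routine"
    NO_FOLLOW_UP = "no follow-up required"


# Hypothetical list of phrases a practice might reject as non-actionable.
NON_ACTIONABLE = ("clinical correlation recommended",)


def impression_errors(items: list[str], urgency: str, follow_up: str) -> list[str]:
    """Return reasons the impression cannot be signed (empty list means OK)."""
    errors = []
    if not any(item.strip() for item in items):
        errors.append("At least one impression item is required.")
    try:
        level = Urgency(urgency)
    except ValueError:
        errors.append(f"Unknown urgency classification: {urgency!r}")
        level = None
    # Assumed rule: any urgency other than "no follow-up required" needs an actionable recommendation.
    if level is not None and level is not Urgency.NO_FOLLOW_UP:
        text = follow_up.strip()
        if not text:
            errors.append("A follow-up recommendation is required for this urgency level.")
        elif text.lower().rstrip(".") in NON_ACTIONABLE:
            errors.append("Follow-up recommendation is not actionable.")
    return errors


def numbered(items: list[str]) -> str:
    """Number impression items in the order the radiologist ranked them."""
    return "\n".join(f"{n}. {text}" for n, text in enumerate(items, start=1))
```

Because the urgency is an enum value rather than free text, it can drive alert routing directly.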

Building Effective Radiology Report Templates

Template quality is the primary determinant of whether a structured reporting program improves workflow or creates friction. A well-designed template reduces the time and cognitive effort required to produce a complete report. A poorly designed template — too generic, too long, or poorly aligned with the study mix it is meant to serve — increases reporting time and drives radiologists to bypass the structured fields in favor of free-text dictation, thereby defeating the program’s purpose.

Six design principles that separate effective templates from counterproductive ones

- Templates must be modality and body-part-specific. A chest X-ray template and a chest CT template serve different clinical purposes and require different finding sections: the chest CT template needs pleura, mediastinum, and bones sections that a chest X-ray template may handle more briefly. A CT pulmonary angiography template needs a pulmonary embolism section documenting clot extent (central, lobar, segmental, subsegmental) and the presence of right heart strain, which neither a standard chest X-ray nor a standard chest CT template requires. Practices that use a single “chest” template for all chest study types produce reports that are either too sparse for complex CT studies or too cumbersome for simple X-rays.

- Mandatory fields must be genuinely mandatory — enforced as hard workflow gates, not advisory prompts. If the reporting platform allows sign-off on a chest CT report without completing the lymph node assessment field, the mandatory designation is meaningless. The platform must prevent sign-off until all mandatory fields contain at least a documented value — whether a normal observation, a measurement, or a classification. Soft prompts that the radiologist dismisses with a single click do not produce structured data; they produce the illusion of structured reporting.

- Templates must use standardized terminology aligned with specialty society guidelines. The ACR’s Reporting and Data Systems (RADS) family — BI-RADS for breast, LI-RADS for liver, Lung-RADS for pulmonary nodules, PI-RADS for prostate, TIRADS for thyroid, O-RADS for ovarian — provides validated classification systems with standardized terminology that maps directly to clinical management pathways and payer coverage determinations. A template that uses non-standard terminology for a finding category creates downstream coding and coverage problems: a “suspicious” thyroid nodule reported without TIRADS classification may not trigger the appropriate clinical management pathway, and a lung nodule without a Lung-RADS category may not meet the surveillance CT follow-up coverage requirements.

- Classification systems must be incorporated as structured fields within the relevant templates. The TIRADS category for a thyroid nodule, the BI-RADS assessment for a breast lesion, the Lung-RADS category for a lung nodule, the Bosniak classification for a renal cyst — these classifications must appear as discrete selectable fields, not as text that the radiologist dictates and a coder or analyst later extracts. When the classification is a structured field, it is immediately available for clinical decision support, quality auditing, and population analytics. When it is buried in dictated text, it is accessible only after NLP processing that may introduce extraction errors.

- Templates must be built collaboratively with the referring clinical teams who use the reports. A structured reporting template designed solely for radiologist convenience — minimizing the fields the radiologist must complete — may omit information that the referring specialty depends on finding in a specific location. A neurology team that regularly orders brain MRI for MS follow-up needs the structured FLAIR lesion burden assessment that a general radiology template may not include. An oncology team that relies on structured imaging to trigger RECIST-based treatment decisions needs the target lesion table that a general abdomen CT template may not provide. Template design workshops that include the primary referring departments are not a process luxury — they are what determines whether the structured report serves the clinical decision that ordered the imaging.

- Templates must be version-controlled. When a classification system is updated (Lung-RADS v2022, for example, revised category definitions from Lung-RADS v1.1, released in 2019), the template that incorporates the classification must be updated, and historical reports generated under the prior template version must remain interpretable. Reporting platforms that do not maintain version history create audit problems: a quality review comparing nodule management rates across two years may be comparing data from two different classification systems without the reviewer knowing it.
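Three of the principles above (hard mandatory gates, classifications as discrete fields, and version control) can be sketched together. The `Template` and `Report` classes are hypothetical; the BI-RADS assessment categories are listed as selectable values, with category 4 available both undivided and as 4A to 4C. The template version is stamped onto the signed data so later audits know which definitions applied.

```python
from dataclasses import dataclass, field

# BI-RADS assessment categories as discrete values (4 may be subdivided into 4A-4C).
BIRADS = ("0", "1", "2", "3", "4", "4A", "4B", "4C", "5", "6")


@dataclass(frozen=True)
class Template:
    name: str
    version: str
    required: tuple[str, ...]
    choices: dict = field(default_factory=dict)  # field name -> allowed values


@dataclass
class Report:
    template: Template
    values: dict

    def validate(self) -> list[str]:
        problems = []
        for name in self.template.required:
            value = self.values.get(name)
            if value is None or not str(value).strip():
                problems.append(f"missing: {name}")
        for name, allowed in self.template.choices.items():
            value = self.values.get(name)
            if value is not None and value not in allowed:
                problems.append(f"invalid {name}: {value!r}")
        return problems

    def sign_off(self) -> dict:
        """Hard gate: refuse to sign while any mandatory or classification field is invalid."""
        problems = self.validate()
        if problems:
            raise ValueError("; ".join(problems))
        # The template version travels with the signed data for later audits.
        return {"template": self.template.name,
                "template_version": self.template.version, **self.values}
```

Raising an error, rather than showing a dismissible warning, is what makes the mandatory designation a workflow gate instead of an advisory prompt.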

The RadReport library as a starting framework. The RSNA’s RadReport initiative, available at radreport.org, provides a publicly accessible library of structured radiology reporting templates covering more than 90 study types, developed collaboratively with specialty societies and coded in the RSNA Reporting Initiative XML schema. For radiology practices building structured reporting from scratch, the RadReport library provides validated starting templates that have been reviewed against specialty society guidelines and can be imported into compatible reporting platforms. Starting from RadReport templates and adapting them to the practice’s specific study mix and clinical context is significantly faster and lower-risk than designing templates from scratch, and produces templates that are defensible against guideline-alignment audits.

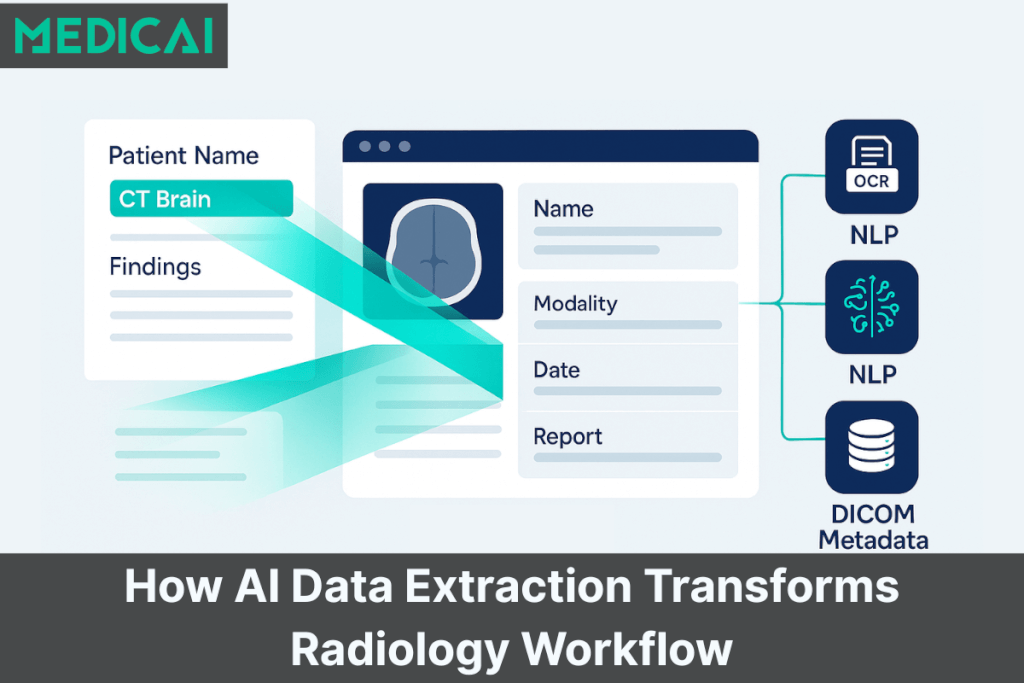

AI-Assisted Radiology Reporting — What It Actually Does

Artificial intelligence in radiology reporting is frequently discussed as though it were a single technology. In practice, AI operates at three distinct levels in the reporting workflow, and the value and risk profiles of each differ significantly. Conflating them — as vendor marketing frequently does — leads practices to either over-invest in capabilities they do not need or dismiss capabilities that would genuinely improve their workflow.

- Level 1 — AI-optimized speech recognition. The most widely deployed AI in radiology reporting is automatic speech recognition (ASR) trained on radiology dictation audio. Unlike general-purpose speech recognition, radiology-optimized ASR understands the specific vocabulary of medical imaging: it correctly transcribes “hilar adenopathy,” “peribronchovascular” as a single compound word, “T2 hyperintense” without confusion, and measurement values dictated at speed (“point three centimeters” transcribing as “0.3 cm” in the report). The dominant products in this category (Nuance PowerScribe, from Nuance, which Microsoft acquired in 2022; M*Modal Fluency for Imaging; and cloud-native alternatives, including those built into integrated PACS-reporting platforms) reduce the keystroke burden of report generation while producing a dictated text draft that the radiologist edits and signs rather than types from scratch. In high-volume reporting environments, the time savings from accurate speech recognition are the most consistently realized AI benefit in radiology reporting today.

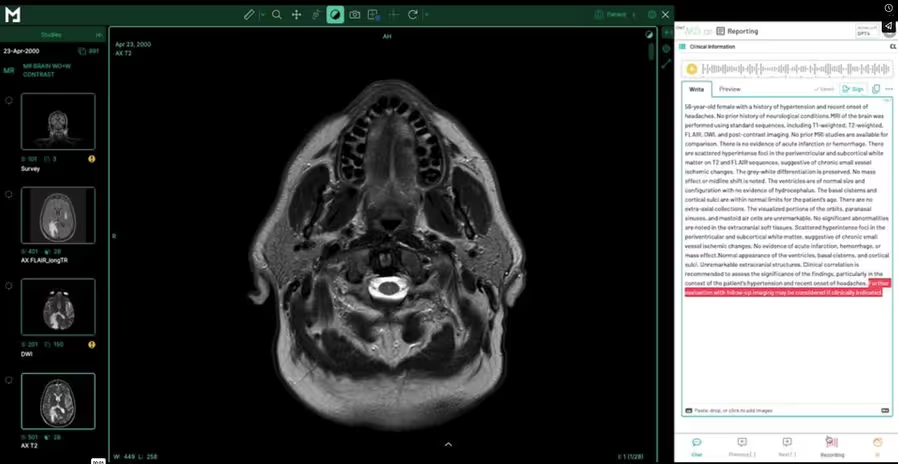

- Level 2 — AI-assisted template population and NLP extraction. The next level applies natural language processing to the dictated or transcribed text and automatically extracts structured data to populate template fields. The radiologist dictates “3.2 cm spiculated left lower lobe nodule adjacent to the major fissure,” and the system extracts the measurement (3.2 cm), the morphology descriptor (spiculated), the location (left lower lobe, adjacent to major fissure), and proposes a Lung-RADS category based on the extracted size and morphology. The radiologist confirms or modifies the extracted values — the template fields are populated, the classification is proposed, and the radiologist’s role is verification rather than data entry. Rad AI’s Impressions product operates at this level for the impression section specifically — it generates a draft impression from the finding text using a large language model, which the radiologist reviews and edits. The net time saving depends on extraction accuracy: a high-accuracy extraction that requires only confirmation is faster than manual entry; an inaccurate extraction that requires correction may not be.

- Level 3 — AI-generated draft reports. The most advanced current AI reporting tools generate a complete draft report — including both findings and impression — from image analysis or from radiologist dictation of initial findings. This is the level at which the most significant accuracy concerns arise, because generated text that is plausible but clinically inaccurate — a hallucination in the AI sense — must be caught and corrected by the radiologist before sign-off. The clinical and medico-legal responsibility for the content of a signed radiology report rests with the radiologist, regardless of how the draft was generated. Practices deploying Level 3 AI reporting tools must establish a clear review protocol that does not allow the efficiency pressure of AI-generated drafts to compress the time radiologists spend verifying accuracy against the actual images.
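Level 2 extraction can be illustrated with a deliberately simple rule-based stand-in. Production systems use trained NLP models; the regular expressions, the small morphology vocabulary, and `extract_nodule` below are hypothetical, and proposing a Lung-RADS category is intentionally left out. Every returned value is a proposal for the radiologist to confirm or edit.

```python
import re

SIZE_RE = re.compile(r"(\d+(?:\.\d+)?)\s*(mm|cm)\b", re.IGNORECASE)
LOBE_RE = re.compile(r"\b(right|left)\s+(upper|middle|lower)\s+lobe\b", re.IGNORECASE)
# Hypothetical, deliberately small descriptor vocabulary.
MORPHOLOGY = ("spiculated", "lobulated", "smooth", "calcified")


def extract_nodule(text: str) -> dict:
    """Propose structured values from one dictated nodule sentence.

    None (or an empty list) means nothing was found and the field stays empty.
    """
    size = SIZE_RE.search(text)
    size_mm = None
    if size:
        value = float(size.group(1))
        size_mm = round(value * 10, 1) if size.group(2).lower() == "cm" else value
    words = set(re.findall(r"[a-z]+", text.lower()))
    lobe = LOBE_RE.search(text)
    return {
        "size_mm": size_mm,
        "morphology": [m for m in MORPHOLOGY if m in words],
        "location": " ".join(lobe.groups()).lower() + " lobe" if lobe else None,
    }
```

Applied to the example sentence “3.2 cm spiculated left lower lobe nodule adjacent to the major fissure,” this returns a size of 32.0 mm, the descriptor “spiculated,” and the location “left lower lobe”; the fissure relationship is not captured, which is exactly the kind of gap radiologist verification exists to catch.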

What AI does not do. AI-assisted reporting tools at any level do not replace the clinical judgment that is the core function of radiology. They do not decide what a finding means, whether it is clinically significant, how it compares to prior studies in a longitudinal context, or what follow-up is appropriate. They do not eliminate the need for structured templates — AI draft quality improves significantly when the output is constrained by template structure, because the template provides the output schema that guides generation. And AI extraction tools do not solve template design problems: a poorly designed template that does not prompt for a finding in a systematic location will not be improved by AI extraction from a dictated text that may or may not mention the finding.

The integrated AI-assisted structured reporting workflow. The highest-value combination applies the first two AI levels in sequence within a single integrated workflow: a structured template defines the required sections and mandatory fields, AI-optimized speech recognition populates the template fields from dictation without keyboard entry, and AI-assisted NLP extracts structured measurements and proposes classification system categories from the dictated text. The radiologist reviews the populated template, confirms or modifies the AI-extracted values, adds the clinical interpretation and follow-up recommendation reflecting their judgment for the specific patient, and signs the completed structured report. The template ensures completeness; the AI reduces the mechanical burden; the radiologist provides the clinical intelligence that neither template design nor AI generation can substitute for.

Integrating Structured Reporting with PACS and RIS

The most common reason structured reporting programs fail after launch is not template quality and not radiologist resistance — it is poor integration with the PACS viewing environment. When the radiologist must switch between a PACS viewer and a separate reporting application to complete a structured report, the additional workflow step creates friction that accumulates across hundreds of studies per week. In a reading room where productivity is measured in studies per hour, any workflow addition that does not save time elsewhere is experienced as a net negative — and will be circumvented.

The synchronized viewer-reporter workflow. The correct PACS integration for structured reporting means that when the radiologist opens a study from the reading worklist, the structured report template for that study type opens simultaneously in a synchronized panel alongside the image viewer — study viewer on the left, report template on the right, in a single application. Measurements the radiologist makes in the viewer — a linear measurement of a lung nodule, an area measurement of a hepatic lesion, a volume measurement from a segmentation tool — automatically populate the corresponding measurement fields in the open report template. Annotations placed on the image link to the report findings section that describes the annotated region. The radiologist never leaves the viewer to switch to a separate application, nor does the radiologist manually transcribe a measurement from the viewer to a text field.

This integration requires the PACS viewer and the reporting platform to share a common data layer. Measurements made in the viewer must be exported as structured DICOM SR (Structured Report) objects and imported into the reporting template in real time. This is technically achievable when the viewer and reporting platform come from the same vendor, or when the reporting platform supports the relevant standards: DICOM SR for measurement transfer, and the IHE Management of Radiology Report Templates (MRRT) profile for template exchange. When the viewer and reporting platform are separate products from different vendors, the integration is frequently incomplete: measurements are not transferred automatically, which forces manual re-entry that eliminates the most significant efficiency benefit of structured reporting and introduces measurement transcription errors. Evaluating the quality of the PACS-reporter integration is the most important due diligence step in selecting a structured reporting platform.
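The receiving side of measurement transfer can be sketched once SR content has been decoded. Here `NumericItem` is a simplified, hypothetical stand-in for a numeric measurement item from a DICOM SR (decoding the SR itself, for example with a DICOM toolkit, is not shown), and `FIELD_MAP` is a hypothetical mapping to template fields. Unmapped items are returned rather than dropped, so the radiologist sees anything the template could not place.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NumericItem:
    """Simplified stand-in for a decoded numeric measurement from a DICOM SR."""
    finding_site: str
    concept: str   # e.g. "Long axis", "Short axis"
    value: float
    unit: str      # UCUM unit code, e.g. "mm" or "cm"


# Hypothetical mapping from (finding site, measured concept) to a template field.
FIELD_MAP = {
    ("lung nodule", "Long axis"): "nodule_long_axis_mm",
    ("lung nodule", "Short axis"): "nodule_short_axis_mm",
}
TO_MM = {"mm": 1.0, "cm": 10.0}


def populate_fields(items: list[NumericItem], report_fields: dict) -> list[NumericItem]:
    """Copy mapped measurements into report fields (normalized to mm).

    Returns items that could not be mapped so they can be shown, not silently lost.
    """
    unmapped = []
    for item in items:
        target = FIELD_MAP.get((item.finding_site, item.concept))
        if target is None or item.unit not in TO_MM:
            unmapped.append(item)
            continue
        report_fields[target] = round(item.value * TO_MM[item.unit], 1)
    return unmapped
```

Normalizing units at the point of transfer removes one common source of the transcription errors described above.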

RIS integration: pre-populated indication and automated sign-off routing

The clinical indication in the structured report must be derived from the imaging order in the RIS, not retyped by the radiologist. This requires an HL7 ORM integration between the RIS and the reporting platform so that the study’s order data — patient identity, clinical indication, ordering physician, relevant clinical history — is available in the report template before the radiologist opens it. When the radiologist signs the report, the reporting platform sends an HL7 ORU message back to the RIS, updating the study status from “read” to “finalized” and triggering the billing workflow from the signed template data. In modern cloud-native environments that support HL7 FHIR, the RIS integration can be bidirectional in real time — the reporting platform can query the current clinical context from the FHIR server rather than waiting for a pushed HL7 message, and the FHIR DiagnosticReport resource can be written directly to the EHR from the signed template.
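To make the pre-population step concrete, here is a minimal HL7 v2 reader that pulls order context from an ORM message. The field positions (PID-3 patient identifier, OBR-13 relevant clinical information, OBR-16 ordering provider, OBR-31 reason for study) follow common HL7 v2 usage, but interfaces vary by site and version, so treat them as assumptions to confirm against the local interface specification. The sketch ignores escape sequences and field repetitions, and `parse_segments`, `get_field`, and `order_context` are hypothetical names.

```python
def parse_segments(message: str) -> dict[str, list[str]]:
    """Split an HL7 v2 message into {segment id: fields}, keeping the first of each."""
    segments: dict[str, list[str]] = {}
    for raw in message.replace("\n", "\r").split("\r"):
        if raw:
            fields = raw.split("|")
            segments.setdefault(fields[0], fields)
    return segments


def get_field(segments: dict[str, list[str]], seg_id: str, index: int, component: int = 1) -> str:
    """Return SEG-index.component (1-based), or '' if absent.

    For non-MSH segments, splitting on '|' puts field N at list index N.
    MSH is offset by one (MSH-1 is the separator itself), so do not use this for MSH.
    """
    fields = segments.get(seg_id, [])
    if index >= len(fields):
        return ""
    parts = fields[index].split("^")
    return parts[component - 1] if component <= len(parts) else ""


def order_context(message: str) -> dict[str, str]:
    """Extract the order data the report template needs before the study is opened."""
    seg = parse_segments(message)
    # Prefer the text component of the coded reason for study, else its identifier.
    indication = get_field(seg, "OBR", 31, 2) or get_field(seg, "OBR", 31, 1)
    return {
        "patient_id": get_field(seg, "PID", 3),
        "ordering_physician": get_field(seg, "OBR", 16, 2),  # family name component
        "clinical_indication": indication,
        "clinical_history": get_field(seg, "OBR", 13),
    }
```

In practice an interface engine or an HL7 library would handle parsing; the point is that the indication arrives as data the template can display for verification, not as text the radiologist retypes.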

EHR distribution and critical result management

The signed structured report must reach the ordering physician’s EHR in a format the EHR can use — not as a PDF attachment that is stored but not parsed, but as structured content that the EHR can display natively and route appropriately. For critical results — findings that require immediate clinical action — the reporting platform must support a notification workflow that alerts the ordering clinician through a channel that generates a confirmation receipt: a documented telephone contact log, a direct EHR alert with acknowledgment requirement, or a two-way messaging system where the clinician’s receipt is recorded in the reporting system. The ACR Practice Parameters for Communication of Diagnostic Imaging Findings require that critical results be communicated directly to the responsible provider and documented. Structured reporting platforms that log the critical result notification within the report record, with a timestamp and recipient confirmation, provide the audit trail required to satisfy this requirement.
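A critical result notification log can be modelled as a record that is only compliant when it names the recipient, uses an approved channel, and carries a confirmation of receipt. The channel names, the `CriticalResultNotice` class, and its checks are hypothetical; they sketch the kind of audit trail described above rather than any specific regulatory rule set.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional

# Hypothetical channel names for notifications that produce a receipt.
ALLOWED_METHODS = {"telephone", "ehr_alert", "secure_message"}


@dataclass
class CriticalResultNotice:
    accession: str
    finding: str
    recipient: str
    method: str
    notified_at: datetime
    acknowledged_at: Optional[datetime] = None

    def problems(self) -> list[str]:
        """List the reasons this notice would fail a documentation audit."""
        issues = []
        if not self.recipient.strip():
            issues.append("recipient not recorded")
        if self.method not in ALLOWED_METHODS:
            issues.append(f"unsupported method: {self.method}")
        if self.acknowledged_at is None:
            issues.append("no confirmation of receipt")
        elif self.acknowledged_at < self.notified_at:
            issues.append("acknowledgement precedes notification")
        return issues

    def is_compliant(self) -> bool:
        return not self.problems()
```

Because each notice is structured, the compliance rate discussed in the metrics below can be computed directly from these records.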

Measuring the Impact of Structured Reporting

A structured reporting program without systematic measurement is a process change without accountability. The following six metrics track whether the program is delivering the outcomes it was implemented to achieve — and, critically, identify where it is failing before failure becomes irreversible.

- Report turnaround time by template type. Time from study acquisition to signed report, segmented by study type and template. The baseline turnaround time for each study type must be measured before implementation, so that the impact of structured reporting can be isolated from seasonal or staffing changes that affect turnaround time independently. Turnaround time should decrease with structured reporting because template-guided workflows reduce report composition time. If turnaround time increases for a specific template type after implementation, the template is too complex, the mandatory field set is misaligned with the study, or the PACS integration is creating friction that outweighs the template efficiency gain.

- Mandatory field completion rate. The percentage of signed reports in which all mandatory fields contain a documented value. A completion rate below 98% indicates either that the mandatory field enforcement is not functioning as a hard gate in the platform configuration, or that specific mandatory fields are regularly problematic — a finding section that does not apply to a subset of study types is being bypassed because the template does not accommodate that subset. Monthly mandatory field completion reporting by field and by template identifies which fields are regularly incomplete and whether the problem is a platform configuration issue or a template design issue.

- Structured data extraction accuracy for AI-assisted population. For practices using NLP-assisted template population, the percentage of AI-extracted values (measurements, classification proposals, finding characterizations) that the radiologist accepts without modification. A high acceptance rate (roughly 85–90% or above) indicates that AI extraction is accurate and that the efficiency gains from AI assistance are being realized. A low acceptance rate indicates that radiologists are spending more time correcting AI output than the AI assistance saves, and that the NLP model may require retraining on the practice’s specific dictation style or study mix.

- Critical result notification compliance rate. The percentage of urgent and emergent findings that are documented in the reporting system with a completed critical result notification log — including the identity of the clinician reached, the communication method, and the timestamp. This metric is auditable from structured report data, unlike free-text reports. Practices that have not measured this rate before the structured reporting implementation are frequently surprised by how variable their baseline compliance with critical result documentation is.

- Referring clinician report clarity rating. Structured reporting primarily benefits the clinicians who receive the reports. Practices can collect referring clinician feedback systematically through a quarterly survey, through direct communication with the primary referring departments, or by tracking the rate of telephone calls requesting report clarification. This feedback provides external validation that the report format answers the clinical question behind the imaging order. The telephone clarification call rate is particularly actionable. If a specific template type generates a disproportionate number of clarification calls, the impression section or the finding characterization language in that template needs revision.

- Charge capture accuracy rate. For practices using automated charge capture from structured template data, the percentage of studies where the system-proposed CPT code matches the code that would have been manually assigned by a certified radiology coder. Improvement in this rate relative to the pre-structured-reporting baseline quantifies the program’s impact on billing quality and identifies templates where the CPT mapping logic requires refinement.
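
Several of these metrics reduce to simple ratios over signed-report records. The sketch below assumes a hypothetical export in which each signed report carries several values: its template identifier, its acquisition-to-signature time, a mandatory-completion flag, AI proposal and acceptance counts, and both the system-proposed and coder-assigned CPT codes. The field names are illustrative and do not correspond to any particular platform's schema. Using the median for turnaround is a design choice made here, not something the metric definition requires.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class SignedReport:
    # Hypothetical export fields; a real reporting platform will name these differently.
    template: str             # template identifier, e.g. "chest-ct"
    turnaround_min: float     # study acquisition to signed report, in minutes
    mandatory_complete: bool  # every mandatory field held a documented value at signing
    ai_proposed: int          # AI-extracted values offered to the radiologist
    ai_accepted: int          # of those, values accepted without modification
    proposed_cpt: str         # CPT code proposed from structured template data
    coder_cpt: str            # CPT code a certified coder assigned on audit


def rate(numerator: int, denominator: int) -> float:
    """Percentage; returns 0.0 when the denominator is zero (nothing to measure)."""
    return 100.0 * numerator / denominator if denominator else 0.0


def program_metrics(reports: list[SignedReport]) -> dict:
    """Compute turnaround, completion, AI acceptance, and charge capture metrics."""
    tat_by_template: dict[str, list[float]] = {}
    for r in reports:
        tat_by_template.setdefault(r.template, []).append(r.turnaround_min)
    return {
        # Median is less distorted than the mean by a few very late reports.
        "median_tat_by_template": {t: median(v) for t, v in tat_by_template.items()},
        "mandatory_completion_pct": rate(sum(r.mandatory_complete for r in reports), len(reports)),
        # Pooled across reports, so reports with more AI proposals carry more weight.
        "ai_acceptance_pct": rate(sum(r.ai_accepted for r in reports),
                                  sum(r.ai_proposed for r in reports)),
        "charge_capture_accuracy_pct": rate(
            sum(r.proposed_cpt == r.coder_cpt for r in reports), len(reports)),
    }
```

Running the same function on pre-implementation data gives the baseline against which post-implementation changes can be compared.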

Evaluating Structured Reporting Software

The radiology reporting software market includes products at significantly different capability levels, price points, and integration depths. The right evaluation framework for a radiology practice depends on whether the primary need is voice dictation, structured template workflow, AI-assisted report generation, or full integration with an existing PACS platform. These are different products serving different parts of the same workflow.

Template library breadth and specialty coverage. The platform should include a pre-built template library covering the practice’s primary study types (chest, abdomen and pelvis, MSK, neuro, breast), with templates that incorporate the relevant ACR RADS classification systems as structured fields. Practices should not need to build templates from scratch for standard study types. The relevant question is not “how many templates are available” but “do the available templates include the specific classification systems (BI-RADS, LI-RADS, Lung-RADS, TI-RADS, PI-RADS) as structured fields, or as text guidance only?”
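
The difference between a structured classification field and text guidance can be illustrated with a closed set of allowed values. The sketch below encodes the BI-RADS final assessment categories (0 through 6, with the optional 4A/4B/4C subdivisions) as a Python enum. The class and function names are hypothetical. A production template would also need modality-specific rules about which categories and subcategories may be used.

```python
from enum import Enum


class BiRadsAssessment(Enum):
    """BI-RADS final assessment categories as a closed set of structured values."""
    INCOMPLETE = "0"
    NEGATIVE = "1"
    BENIGN = "2"
    PROBABLY_BENIGN = "3"
    SUSPICIOUS = "4"               # used without subdivision where 4A/4B/4C are not applied
    SUSPICIOUS_LOW = "4A"
    SUSPICIOUS_MODERATE = "4B"
    SUSPICIOUS_HIGH = "4C"
    HIGHLY_SUGGESTIVE_OF_MALIGNANCY = "5"
    KNOWN_BIOPSY_PROVEN_MALIGNANCY = "6"


def parse_birads(value: str) -> BiRadsAssessment:
    """Accept only a valid category code; anything else raises ValueError."""
    return BiRadsAssessment(value.strip().upper())
```

A field like this cannot store "probably 3" or "3-4". A text-guidance template would accept either entry, and the value could then only be recovered by parsing report text.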

PACS viewer integration quality. This is the single most important practical differentiator between structured reporting platforms for practices that perform diagnostic reading. The evaluation questions are specific:

- Do the viewer and reporter open in synchronized panels within a single application, or does the radiologist switch between two applications?
- Do measurements made in the viewer automatically populate the report template measurement fields, or does the radiologist transcribe them manually?
- Does the integration cover all modalities in the practice’s study mix, or only specific modality types?

Request a workflow demonstration with the actual modality types and study complexity levels the practice handles, not with a curated demo dataset.
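
To make the measurement question concrete, the sketch below shows one way a viewer measurement could be routed into a template field, with units converted to what the field expects. Several elements are hypothetical assumptions for illustration: the ViewerMeasurement and ReportTemplate types, the label-based matching, and the field names. Integrated platforms implement this mapping internally. The point of the demonstration is to confirm that the mapping happens without manual transcription.

```python
from dataclasses import dataclass, field

TO_MM = {"mm": 1.0, "cm": 10.0}  # conversion factors to millimeters


@dataclass
class ViewerMeasurement:
    label: str    # annotation label set in the viewer (hypothetical), e.g. "nodule_1_long_axis"
    value: float
    unit: str     # only "mm" or "cm" are supported in this sketch


@dataclass
class ReportTemplate:
    measurement_fields: set[str]                           # template fields expecting a length in mm
    values: dict[str, float] = field(default_factory=dict)

    def populate(self, m: ViewerMeasurement) -> bool:
        """Copy a viewer measurement into its matching field; return False if no field matches."""
        if m.label not in self.measurement_fields:
            return False  # left for the radiologist to place manually
        if m.unit not in TO_MM:
            raise ValueError(f"unsupported unit {m.unit!r} for {m.label}")
        self.values[m.label] = round(m.value * TO_MM[m.unit], 1)
        return True
```

In this design, unmatched measurements are reported back rather than silently dropped, so the radiologist can see what still needs manual placement.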

AI dictation accuracy with the practice’s vocabulary. Speech recognition accuracy is highly individual. It varies with voice characteristics, accent, speaking pace, and the specific vocabulary of the subspecialty. A system that achieves 97% accuracy in a vendor demo with a trained reader may achieve 85% with a radiologist whose dictation pattern differs. Before committing to a platform, have the radiologists who will use the system run a pilot with their own study types and dictation style. Moving from 95% to 85% recognition accuracy triples the number of misrecognized words to correct (15% versus 5% of dictated words). Across hundreds of reports per week, that difference becomes a significant editing burden.
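
The editing burden can be estimated with simple arithmetic. The sketch below treats recognition accuracy as a word-level rate and assumes errors are spread evenly across words. The 150-word report length and 400 reports per week are hypothetical planning figures, not measurements.

```python
def words_to_correct_per_week(accuracy: float, words_per_report: int, reports_per_week: int) -> float:
    """Expected misrecognized words per week, assuming a uniform word-level error rate."""
    return (1.0 - accuracy) * words_per_report * reports_per_week


# Hypothetical workload: 150-word reports, 400 reports per week.
for acc in (0.97, 0.95, 0.85):
    print(f"{acc:.0%}: {words_to_correct_per_week(acc, 150, 400):,.0f} words to correct")
```

Under these assumptions, 95% accuracy leaves about 3,000 words to correct each week, and 85% leaves about 9,000.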

Critical result workflow with documented audit trail. The platform must support a critical result notification workflow that records the notification in the report record — clinician contacted, communication method, timestamp, and confirmation of receipt. This is not a premium feature for well-resourced practices; it is a regulatory requirement in most jurisdictions and a medico-legal necessity for any practice.
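
Below is a minimal sketch of such a record, together with a signing check that refuses to finalize a report with a critical finding until the notification log is complete. The field names and the choice to enforce the check at signing are assumptions for illustration. Some workflows permit signing first and documenting the notification in an addendum, and the appropriate rule depends on local policy.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class CriticalResultNotification:
    clinician_contacted: str       # name or identifier of the clinician reached
    method: str                    # e.g. "phone", "in person", "secure message"
    notified_at: Optional[datetime]
    receipt_confirmed: bool        # clinician confirmed receipt (e.g. read-back)

    def missing_elements(self) -> list[str]:
        """Names of audit-trail elements that have not been documented."""
        missing = []
        if not self.clinician_contacted.strip():
            missing.append("clinician_contacted")
        if not self.method.strip():
            missing.append("method")
        if self.notified_at is None:
            missing.append("notified_at")
        if not self.receipt_confirmed:
            missing.append("receipt_confirmed")
        return missing


def can_sign(has_critical_finding: bool, log: Optional[CriticalResultNotification]) -> bool:
    """Block signing while a critical finding lacks a complete notification log."""
    if not has_critical_finding:
        return True
    return log is not None and not log.missing_elements()
```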

Where the major platforms sit:

Nuance PowerScribe (Nuance is now part of Microsoft) holds the largest installed base in the US market. It is an enterprise-grade dictation and structured reporting platform designed for hospital-scale radiology departments, with strong AI dictation accuracy, good structured reporting capabilities, and deep RIS integration. Its pricing and implementation complexity suit hospitals rather than small practices or teleradiology operations that need a cloud-native solution without an on-site implementation engagement.

Rad AI is the fastest-growing AI-first reporting platform in the US market. Its Impressions product generates AI-drafted impression sections from radiologist findings, and its Omni Reporting product covers the full reporting workflow. Strong AI draft generation, primarily in the US teleradiology and high-volume outpatient market. Less focus on template-driven structured fields compared to measurement-based platforms.

RadioReport is a European-origin structured reporting platform with an extensive template library covering more than 150 study types and strong integration of classification systems. Built specifically around structured template workflows rather than AI draft generation. Well-suited for practices that prioritize template completeness over AI dictation speed.

rScriptor is a voice dictation-first platform with a US market focus. Strong in dictation workflow, weaker in structured template enforcement and PACS integration compared to fully integrated platforms.

AbbaDox integrates RIS, worklist management, dictation, and report distribution in a single platform. Strong in workflow management for outpatient radiology operations; less focused on deep structured template capabilities.

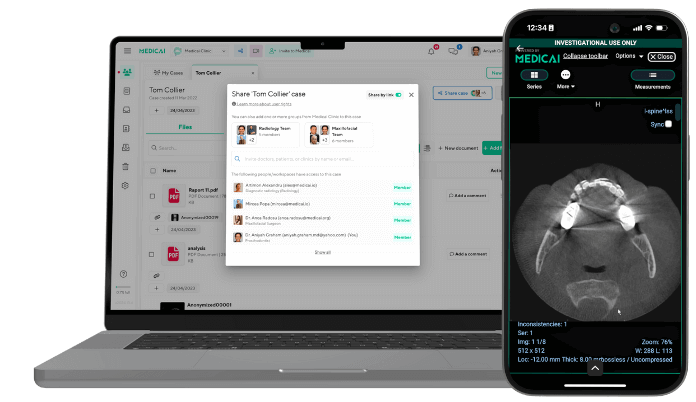

Medicai’s structured reporting module is built directly into the Medicai cloud PACS platform — the viewer and structured report template open in synchronized panels within the same application, measurements from the viewer automatically populate template fields, and the signed report triggers the HL7 ORU workflow without leaving the Medicai environment. The platform includes a library of ready-made radiology report templates with AI-powered dictation, as well as a customizable template builder for practice-specific needs. For practices already using Medicai Cloud PACS, the integrated structured reporting module eliminates the need for a separate reporting platform and the integration complexity that arises when the viewer and reporter are different products. For practices evaluating a new cloud PACS that includes structured reporting as a native capability, the total cost of ownership is meaningfully lower than a two-product deployment. See the structured reporting for radiology product page for the full feature set and a free 14-day trial.
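
For readers less familiar with the HL7 ORU step, the sketch below assembles a minimal, generic HL7 v2.5 ORU^R01 message carrying a signed report as text (TX) OBX segments. It is not Medicai's interface. The application name, the local codes CTCHEST and REPORT, and the sparse field population are hypothetical. A production interface would follow the receiving system's interface specification, including sending and receiving facilities, ordering provider, and observation timestamps.

```python
from datetime import datetime


def hl7_escape(text: str) -> str:
    """Escape HL7 v2 delimiters in free text; the escape character itself goes first."""
    for raw, esc in (("\\", "\\E\\"), ("|", "\\F\\"), ("^", "\\S\\"), ("&", "\\T\\"), ("~", "\\R\\")):
        text = text.replace(raw, esc)
    return text


def segment(name: str, fields: dict[int, str]) -> str:
    """Join 1-based field positions into one segment; unlisted fields stay empty."""
    values = [fields.get(i, "") for i in range(1, max(fields) + 1)]
    if name == "MSH":
        values = values[1:]  # MSH-1 is the "|" separator itself, so output starts at MSH-2
    return "|".join([name] + values)


def build_oru_r01(msg_id: str, patient_id: str, patient_name: str, accession: str,
                  report_lines: list[str], sent_at: datetime) -> str:
    segments = [
        segment("MSH", {2: "^~\\&", 3: "REPORTING", 7: sent_at.strftime("%Y%m%d%H%M%S"),
                        9: "ORU^R01", 10: msg_id, 11: "P", 12: "2.5"}),
        segment("PID", {1: "1", 3: patient_id, 5: patient_name}),  # name components already ^-separated
        segment("OBR", {1: "1", 3: accession, 4: "CTCHEST^CT chest", 25: "F"}),  # OBR-25 "F" = final
    ]
    for n, line in enumerate(report_lines, start=1):
        segments.append(segment("OBX", {1: str(n), 2: "TX", 3: "REPORT^Report text",
                                        5: hl7_escape(line), 11: "F"}))
    # Each HL7 v2 segment is terminated by a carriage return.
    return "".join(s + "\r" for s in segments)
```

Escaping matters because report text can legitimately contain characters such as "|" or "^" that would otherwise be read as field or component separators.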

Frequently Asked Questions

What is structured radiology reporting?

Structured radiology reporting is a methodology for producing radiology reports using predefined templates that specify mandatory sections, required data fields, measurement formats, and finding classification systems. Instead of dictating a free-text narrative, the radiologist works through a template that prompts for each required element — indication, technique, comparison studies, findings by anatomical region, measurements, impression, and follow-up recommendation. The output is a report with a consistent structure and terminology that can be used for clinical decision support, billing, quality measurement, and data analytics in ways that free-text reports cannot. The ACR and RSNA have both published recommendations supporting structured reporting as the standard for diagnostic radiology practice.

What sections must a radiology report include?

A complete radiology report includes six mandatory sections:

- clinical indication (the specific clinical question the imaging was ordered to address)
- technique (acquisition parameters and any protocol deviations)
- comparison studies (prior imaging reviewed with study dates and modalities)
- findings (systematic coverage of all relevant anatomical regions with explicit normal and abnormal observations)
- quantitative measurements (structured numeric fields for all clinically meaningful measurements)
- impression (numbered conclusions ordered by clinical significance with follow-up recommendations and an urgency classification)

The ACR Practice Parameter for Communication of Diagnostic Imaging Findings specifies that the impression must address the clinical indication directly and include actionable follow-up recommendations where clinically indicated.
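
Enforcing these sections as a hard gate can be as simple as refusing to sign while any mandatory section is blank. The sketch below uses hypothetical section keys mirroring the six sections above. It treats an explicit entry such as "None available." for comparison studies as a documented value. A real template would also enforce field-level rules within each section.

```python
MANDATORY_SECTIONS = ("indication", "technique", "comparison",
                      "findings", "measurements", "impression")


def signing_blockers(report: dict[str, str]) -> list[str]:
    """Return mandatory sections that are missing or blank; an empty list means the report can be signed."""
    return [s for s in MANDATORY_SECTIONS if not report.get(s, "").strip()]
```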

What does “impression” mean on a radiology report?

The impression is the radiologist’s clinical conclusion — a numbered summary of the most clinically significant findings, ordered from most to least important, written in plain language that the ordering clinician can act on without radiology training. The impression is the section of the radiology report that the referring physician reads first and most carefully. It must directly address the clinical question posed in the indication, explicitly state whether the primary finding supports or argues against the suspected diagnosis, and include a specific follow-up recommendation with a timeframe when one is warranted. An impression that says “clinical correlation recommended” without specifying what clinical correlation should be performed and the recommended timeframe is incomplete and fails to meet the referring clinician’s need for actionable guidance.
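
A rough automated check for this failure mode is possible with pattern matching. The sketch below is a keyword heuristic, not NLP. It flags impression lines that recommend follow-up or correlation but state no timeframe. The patterns are illustrative assumptions: they will miss some phrasings and flag others, so the output is a prompt for review rather than a verdict.

```python
import re

# Illustrative patterns only; real impressions use many more phrasings.
RECOMMENDS = re.compile(r"\b(recommend(ed)?|follow-up|correlation)\b", re.IGNORECASE)
TIMEFRAME = re.compile(
    r"\b(in|within)\s+\d+(\s*(-|to)\s*\d+)?\s*(days?|weeks?|months?|years?)\b", re.IGNORECASE)


def flag_vague_recommendations(impression: str) -> list[str]:
    """Return impression lines that recommend an action but state no timeframe."""
    return [line.strip() for line in impression.splitlines()
            if RECOMMENDS.search(line) and not TIMEFRAME.search(line)]
```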

What is the best radiology reporting software?

The best radiology reporting software depends on what the practice primarily needs. For voice dictation accuracy with AI-assisted impression drafting in a high-volume US radiology environment, Rad AI’s Omni Reporting is the strongest current option. For deep structured template libraries with classification system integration, RadioReport is purpose-built for template-driven structured reporting. For enterprise hospital departments requiring an established platform with broad RIS integration, Nuance PowerScribe (now part of Microsoft) has the largest installed base. For practices that want structured reporting integrated directly with a cloud PACS, avoiding the workflow friction of separate viewer and reporting applications, Medicai’s integrated platform combines both in a single subscription. The most common evaluation mistake is selecting a platform based solely on AI dictation accuracy without testing PACS integration quality, which is the primary driver of adoption versus abandonment in the first year.

How does AI assist radiology reporting?

AI assists radiology reporting at three levels. Level 1 is speech recognition optimized for radiology vocabulary — AI that converts dictated speech to text with high accuracy for medical imaging terminology, reducing keyboard entry without changing the report structure. Level 2 is NLP-based template population — AI that extracts structured data from dictated or transcribed text and populates template fields, such as detecting a measurement and a classification in dictated text and entering them into the corresponding report template fields automatically. Level 3 is AI-generated draft reports — AI that uses large language models to generate a complete report draft from radiologist finding input, which the radiologist reviews and signs. Each level carries a different accuracy profile and a different relationship with radiologist review time. The radiologist’s clinical and medico-legal responsibility for the content of the signed report does not change regardless of which AI level produced the draft.
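
Level 2 can be illustrated with a deliberately simple stand-in. Production systems use trained NLP models. The sketch below swaps in regular expressions to show the shape of the task: it finds measurements and a Lung-RADS category (0, 1, 2, 3, 4A, 4B, 4X) in dictated text and returns them as proposals for the radiologist to accept or edit. The field names are hypothetical, and the sketch does not handle the Lung-RADS "S" modifier.

```python
import re

MEASUREMENT = re.compile(r"(\d+(?:\.\d+)?)\s*(mm|cm)\b", re.IGNORECASE)
LUNG_RADS = re.compile(r"lung-?rads\s*(?:category\s*)?(0|1|2|3|4A|4B|4X)\b", re.IGNORECASE)


def propose_fields(dictation: str) -> dict[str, object]:
    """Propose template values from dictated text; nothing is written without radiologist review."""
    proposals: dict[str, object] = {}
    sizes_mm = [float(num) * (10.0 if unit.lower() == "cm" else 1.0)
                for num, unit in MEASUREMENT.findall(dictation)]
    if sizes_mm:
        # Hypothetical field: the largest dimension mentioned anywhere in the dictation.
        proposals["largest_dimension_mm"] = max(sizes_mm)
    category = LUNG_RADS.search(dictation)
    if category:
        proposals["lung_rads_category"] = category.group(1).upper()
    return proposals
```

Because the output is only a set of proposals, it fits the acceptance-rate metric described earlier. Each proposed value is either accepted as-is or modified by the radiologist.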

Where do I get radiology report templates?

The RSNA’s RadReport library at radreport.org provides more than 90 structured reporting templates covering common radiology study types, developed collaboratively with specialty societies and coded in the RSNA Reporting Initiative XML schema. These templates are freely available and can be imported into compatible reporting platforms. They provide a validated starting framework that practices can adapt to their specific study mix and institutional requirements rather than building from scratch. Specialty-specific template resources include the ACR’s RADS templates (BI-RADS, Lung-RADS, LI-RADS, TI-RADS, PI-RADS) published through the ACR’s clinical resources portal, and European Society of Radiology (ESR) structured reporting templates for European practice contexts.

How does structured reporting reduce radiology report turnaround time?

Structured reporting reduces turnaround time through two mechanisms. The first is template-guided composition: the radiologist works through predefined sections rather than composing the report format in real time alongside the clinical content, reducing the cognitive overhead of report generation, particularly for complex multi-finding studies. The second is AI-assisted dictation and field population: speech recognition reduces keyboard entry time, and NLP-assisted template population reduces the time spent manually entering structured measurement data. The turnaround time reduction is most pronounced for high-complexity study types with multiple required measurements and classification assignments — chest CT for lung nodule surveillance, abdominal MRI for liver lesion characterization — where the template and AI assistance together can reduce report generation time by 20–35% compared to free-text dictation for the same study type. For simple study types — such as two-view chest X-rays with normal findings — the turnaround time impact of structured reporting is smaller because the free-text report is also brief.
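
One caution applies when interpreting the 20–35% figure. It describes report generation time, while turnaround time (acquisition to signed report) also includes the time a study waits in the worklist. The sketch below assumes turnaround is simply queue time plus composition time. It shows how a reduction in composition time translates into a smaller proportional change in turnaround. The 30-minute queue and 12-minute composition time are hypothetical.

```python
def turnaround_after(queue_min: float, composition_min: float, reduction: float) -> tuple[float, float]:
    """New turnaround and its percent reduction when only composition time shrinks by `reduction`."""
    before = queue_min + composition_min
    after = queue_min + composition_min * (1.0 - reduction)
    return after, 100.0 * (before - after) / before


# Hypothetical study: 30 minutes in the worklist, 12 minutes to compose the report.
new_tat, pct = turnaround_after(30.0, 12.0, 0.25)
print(f"{new_tat:.0f} min, {pct:.1f}% shorter")  # 39 min, 7.1% shorter
```

Under these assumptions, a 25% cut in composition time shortens turnaround by about 7%. The more of the total turnaround that composition accounts for, the closer the two percentages become.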

Conclusion

Structured radiology reporting is a clinical quality program that requires a technology stack to function, but the technology does not determine the outcome. The templates determine whether the report serves the clinicians who use it. The PACS integration determines whether radiologists adopt the workflow or circumvent it. The measurement program tells the practice whether structured reporting is actually working. And the AI tools (dictation, NLP extraction, draft generation) reduce the mechanical burden of producing structured reports without substituting for the clinical intelligence that makes a radiology report useful.

Some practices achieve lasting value from structured reporting: consistent improvement in turnaround time, measurable reduction in referring clinician clarification calls, and automated charge capture that reduces coding errors. These practices design templates with input from referring clinical departments and enforce mandatory fields as hard workflow gates. They also choose a reporting platform with genuinely integrated PACS viewer synchronization rather than a parallel application, and they measure outcomes against a documented baseline. The evidence base supporting structured reporting over free-text dictation is established. This guide is designed to support the implementation decisions that determine whether that evidence translates into practice.
