AI Radiology Workflow: How AI Fits Into Every Stage of Reading, Reporting, and Worklist Management

The AI radiology workflow is the integration of machine learning models into the radiologist’s reading workflow: at the worklist, during image review, during measurement and segmentation, during report generation, and in the operational scaffolding around the reading task. AI does not replace the radiologist. It operates as a computational layer within the existing workflow: AI processes the DICOM image data at acquisition, reorders the worklist by clinical urgency, flags potential findings during reading, calculates quantitative measurements, drafts report content, and surfaces prior studies and hanging protocols. The radiologist still verifies, interprets, and signs every report, whether on site or remotely through teleradiology.

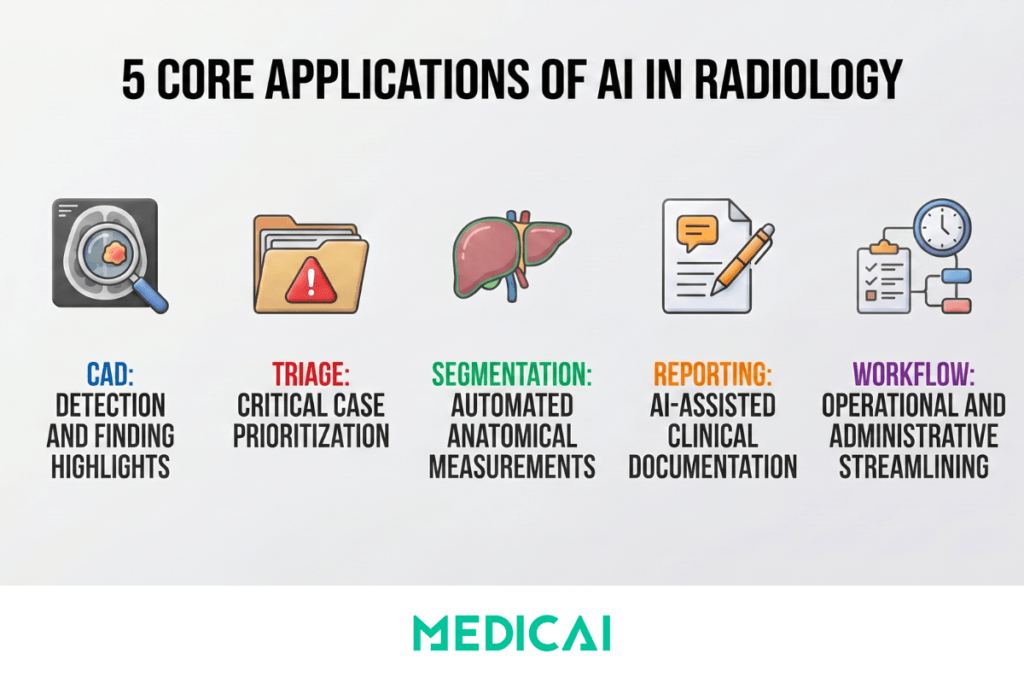

AI fits into the radiology workflow across five distinct stages, each addressing a different operational moment: worklist triage and prioritization, computer-aided detection (CAD) during reading, image segmentation for quantitative measurement, reporting assistance for the diagnostic report, and workflow automation for the operational scaffolding. These five stages differ in what they do, where they sit in the workflow timeline, how they are regulated by the FDA and equivalent bodies, and how mature they are in clinical deployment. Understanding the distinction between them is the prerequisite for evaluating what AI can realistically deliver in a specific radiology practice — and what it cannot.

This guide covers the complete AI radiology workflow: how AI fits into each of the five workflow stages, what measurable performance the FDA-cleared products have demonstrated in clinical validation studies, where the limitations and regulatory boundaries stand today, and how to evaluate AI tools at each stage of the workflow for a specific radiology practice.

The Five Stages of the AI Radiology Workflow

1. Triage and Prioritization — AI Before Reading

Triage AI analyses incoming imaging studies and reorders the radiologist’s reading worklist based on clinical urgency — moving studies with suspected critical findings to the top of the queue and pushing routine studies further down. The most clinically significant deployment of triage AI is in stroke and pulmonary embolism workflows, where every minute of delay in radiologist review translates to a measurable clinical outcome difference.

A typical triage workflow operates as follows. A head CT arriving at 2 AM in a busy emergency department is processed by the triage AI within 60 seconds of acquisition. The AI detects imaging features consistent with large-vessel occlusion stroke, assigns a confidence score, and reorders the worklist to place the study at position 1 — ahead of routine studies queued for the same radiologist. The radiologist reviews the flagged study within 5 minutes rather than the 30–45 minutes that worklist-order reading would have produced. The time saved directly reduces door-to-treatment times for stroke patients, where the established relationship between treatment delay and neurological outcome makes triage AI clinically valuable beyond its workflow efficiency.
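The reordering step in this workflow can be sketched in a few lines of Python. This is a minimal illustration, not how any named product works: the `Study` record, the `FLAG_THRESHOLD` value, and the accession numbers are all hypothetical, and real triage products set and validate their own operating points.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Study:
    accession: str
    arrival_order: int                       # position in the first-in, first-out queue
    suspected_finding: Optional[str] = None  # e.g. "LVO"; None when the AI flags nothing
    confidence: float = 0.0                  # model confidence score in [0, 1]


# Hypothetical threshold, chosen only for this example.
FLAG_THRESHOLD = 0.8


def reorder_worklist(studies: List[Study]) -> List[Study]:
    """Put flagged studies first (highest confidence first), then the rest in arrival order."""
    def sort_key(s: Study):
        flagged = s.suspected_finding is not None and s.confidence >= FLAG_THRESHOLD
        # Tuples compare element by element: flagged group, then confidence, then arrival.
        return (0 if flagged else 1, -s.confidence if flagged else 0.0, s.arrival_order)
    return sorted(studies, key=sort_key)


worklist = [
    Study("ACC-001", 1),
    Study("ACC-002", 2),
    Study("ACC-003", 3, suspected_finding="LVO", confidence=0.93),
    Study("ACC-004", 4, suspected_finding="ICH", confidence=0.55),  # below threshold
]
ordered = [s.accession for s in reorder_worklist(worklist)]
print(ordered)  # ACC-003 moves to position 1; the others keep arrival order
```

Keeping unflagged studies in arrival order is a deliberate choice in this sketch: the AI only promotes studies, so a missed finding never pushes a study further back than it would have been without triage.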

Named products in the triage category include Viz.ai (FDA-cleared for large vessel occlusion stroke detection and notification), Aidoc (multi-pathology triage covering intracranial hemorrhage, pulmonary embolism, pneumothorax, and others), and RapidAI (stroke and pulmonary embolism triage). A 2022 study published in Radiology found that AI-assisted triage reduced time-to-notification for critical findings by an average of 60% in emergency radiology settings.

2. Computer-Aided Detection (CAD) — AI During Image Review

Computer-aided detection refers to AI systems that identify and mark potential findings on medical images — flagging suspicious lesions, nodules, fractures, hemorrhages, or other pathological patterns for the radiologist’s review. CAD does not diagnose. It highlights areas of the image that require the radiologist’s attention, leaving the diagnostic interpretation and clinical decision to the physician. CAD is the most mature and most widely deployed category of AI in radiology, with extensive clinical validation across multiple body parts and imaging modalities.

Clinical applications of CAD span the full range of radiology subspecialties. In thoracic radiology, lung nodule detection on chest CT supports Lung-RADS classification and reduces missed lung cancers in screening programs. In breast imaging, mammography CAD detects masses and microcalcifications and supports BI-RADS classification. In emergency radiology, fracture-detection AI flags potential bone injuries on X-ray, and intracranial hemorrhage-detection AI identifies suspicious findings on head CT. Named FDA-cleared products in the CAD category include Aidoc (triage and detection across multiple body parts), Lunit INSIGHT CXR (chest X-ray analysis), and iCAD ProFound AI (mammography).

The FDA maintains a public list of AI/ML-enabled medical devices — as of 2024, over 1,000 devices have received FDA clearance or approval, with radiology representing the single largest category, dominated by CAD and triage applications.

3. Image Segmentation — AI for Quantitative Measurement

Image segmentation AI automatically delineates anatomical structures or pathological regions within medical images — outlining organ boundaries, tumor volumes, vessel anatomy, or specific tissue types to support measurement, treatment planning, and quantitative analysis. Where CAD identifies what might be a finding, segmentation defines exactly where it is and how large.

The clinical applications of segmentation AI are weighted toward quantitative measurement tasks where manual contouring is time-intensive. In cardiac MRI, automated segmentation of left and right ventricular volumes for ejection fraction calculation reduces analysis time from 20–30 minutes of manual contouring to under 30 seconds with AI segmentation, with measurement accuracy correlation of r = 0.95 to 0.98 against expert manual reference standards. In brain MRI, automated hippocampal volume measurement supports dementia assessment and monitoring of Alzheimer’s disease progression. In radiation oncology, automated contouring of organs-at-risk and target tumor volumes on CT simulation studies — a task that traditionally requires 2 to 4 hours of dosimetrist or radiation oncologist time per patient — is now completed in minutes with AI segmentation tools.
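The ejection fraction calculation that follows ventricular segmentation is simple arithmetic once the volumes exist. The sketch below assumes the segmentation model has already produced a voxel count for the left ventricular cavity at end-diastole and end-systole; the voxel counts and voxel spacing are invented for illustration.

```python
from typing import Tuple


def volume_ml(voxel_count: int, spacing_mm: Tuple[float, float, float]) -> float:
    """Volume of a segmented region: voxel count times the volume of one voxel."""
    dx, dy, dz = spacing_mm
    return voxel_count * dx * dy * dz / 1000.0  # 1 mL = 1000 mm^3


def ejection_fraction(edv_ml: float, esv_ml: float) -> float:
    """EF (%) = (EDV - ESV) / EDV * 100."""
    if edv_ml <= 0:
        raise ValueError("end-diastolic volume must be positive")
    return (edv_ml - esv_ml) / edv_ml * 100.0


# Hypothetical example values: 1.5 x 1.5 mm in-plane pixels, 8 mm slices.
spacing = (1.5, 1.5, 8.0)
edv = volume_ml(8000, spacing)  # end-diastolic volume: 144.0 mL
esv = volume_ml(3200, spacing)  # end-systolic volume: 57.6 mL
ef = ejection_fraction(edv, esv)
print(f"EDV={edv:.1f} mL, ESV={esv:.1f} mL, EF={ef:.0f}%")
```

The time saving described above comes from automating the contouring that yields the voxel counts, not from this final calculation.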

Named products in the segmentation category include Tempus Arterys (cardiac MRI segmentation, FDA-cleared), CorticoMetrics (brain volumetric analysis), and Therapixel (automated organ contouring for radiation oncology). The clinical maturity of segmentation AI is highest in cardiac and radiation therapy applications, with active research validating its use in liver, kidney, and lung segmentation for oncology staging and treatment response monitoring.

4. Reporting Assistance — AI in Report Generation

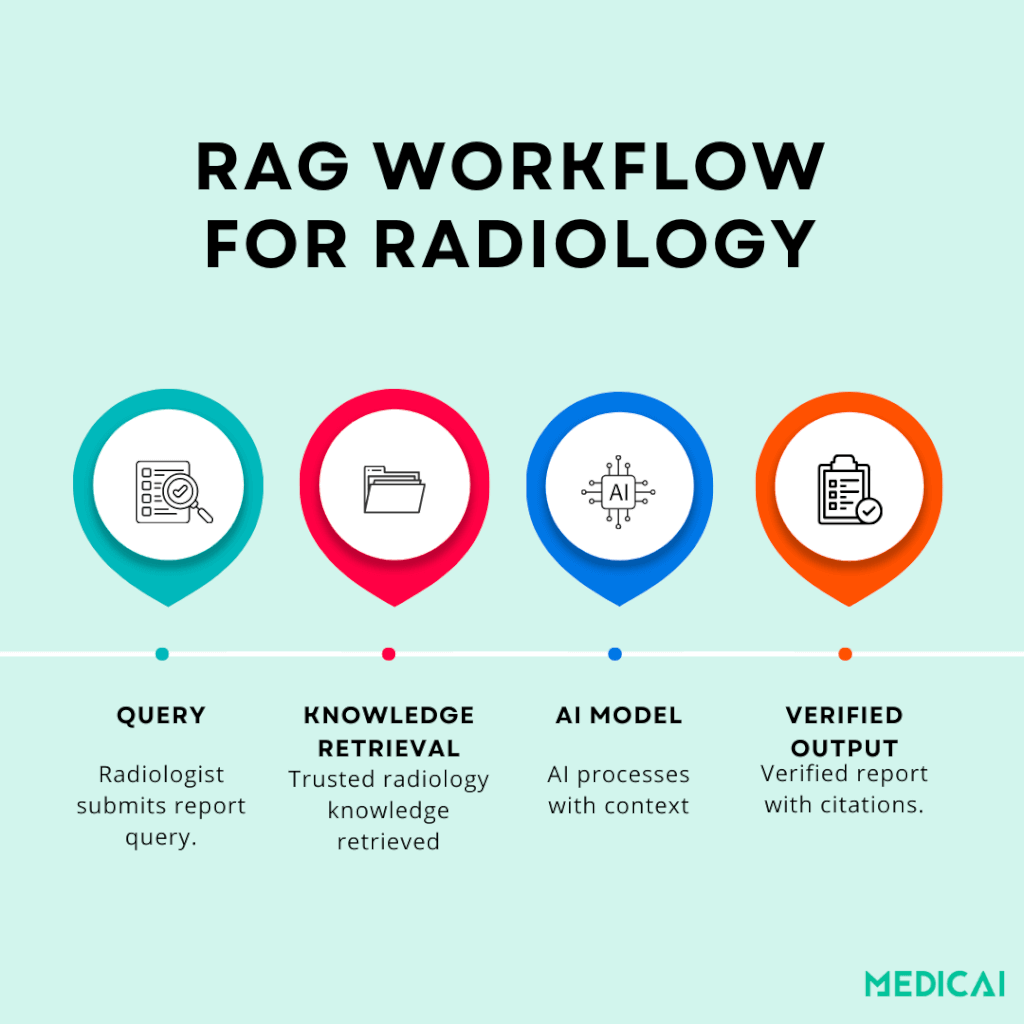

Reporting assistance AI supports the radiologist in producing the written diagnostic report — from speech recognition optimized for radiology vocabulary, through NLP-based structured template population, to AI-generated draft reports and impression sections. This is the application category where the radiology AI conversation has shifted most rapidly over the past two years, driven by the maturation of large language models and the development of retrieval-augmented generation methods that improve clinical accuracy.

The four levels of reporting assistance correspond to increasing AI involvement in the report content. AI-optimized speech recognition — Nuance PowerScribe and Dragon Medical One — transcribes radiologist dictation with vocabulary accuracy calibrated for radiology terminology. This is the most mature reporting AI, deployed across the majority of US radiology practices. NLP-based template population extracts measurements, classifications, and findings from dictated text to populate structured report template fields automatically — the radiologist dictates findings naturally, and the AI structures the output into a standardized template. AI-generated impression drafts — products like Rad AI Impressions produce a draft impression section based on the radiologist’s findings, which the radiologist then reviews, edits, and signs. RAG-enhanced report generation represents the current research frontier: AI report generation models supplemented with retrieval from validated clinical literature and institutional protocols, producing draft reports with citations supporting each finding.
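Template population can be illustrated with a deliberately simple stand-in. The sketch below uses regular expressions to pull a nodule size, lobe, and Lung-RADS category out of a dictated sentence; commercial products use trained clinical language models rather than hand-written patterns, and the field names here are hypothetical.

```python
import re
from typing import Dict, Optional


def populate_template(dictation: str) -> Dict[str, Optional[object]]:
    """Extract a few structured fields from free-text dictation (illustrative only)."""
    fields: Dict[str, Optional[object]] = {"size_mm": None, "lobe": None, "lung_rads": None}

    # First measurement in the text, normalized to millimeters.
    size = re.search(r"(\d+(?:\.\d+)?)\s*(mm|cm)\b", dictation, re.IGNORECASE)
    if size:
        value = float(size.group(1))
        fields["size_mm"] = value * 10 if size.group(2).lower() == "cm" else value

    lobe = re.search(r"\b(right|left)\s+(upper|middle|lower)\s+lobe\b", dictation, re.IGNORECASE)
    if lobe:
        fields["lobe"] = f"{lobe.group(1)} {lobe.group(2)} lobe".lower()

    category = re.search(r"Lung-RADS\s*(?:category\s*)?([0-4][ABX]?)", dictation, re.IGNORECASE)
    if category:
        fields["lung_rads"] = category.group(1).upper()

    return fields


report = populate_template("There is an 8 mm solid nodule in the right upper lobe. Lung-RADS 3.")
print(report)
```

A pattern-based extractor like this breaks on multiple findings, negation, and unusual phrasing, which is one reason the structured output still needs radiologist review before signing.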

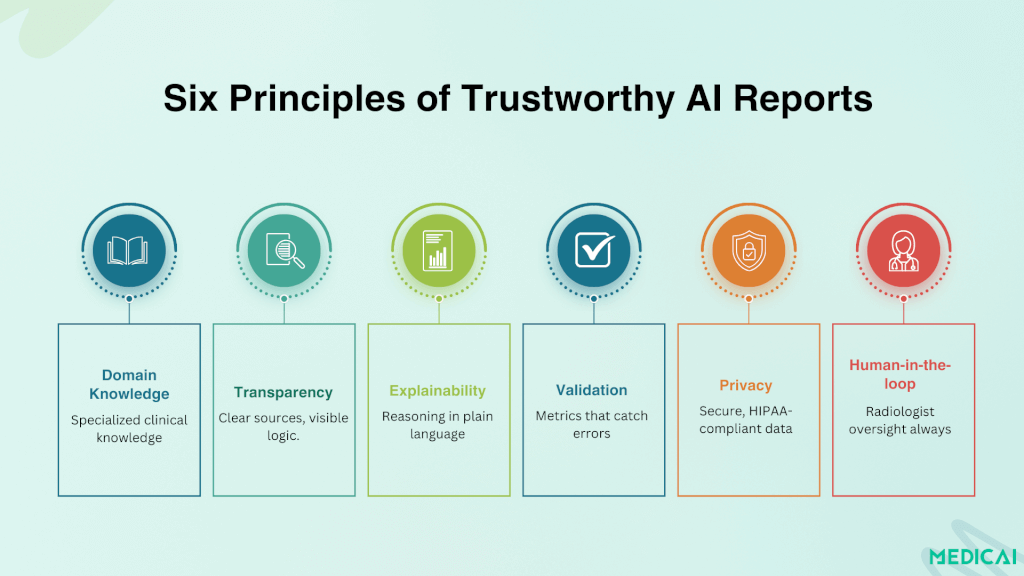

The trust and verifiability of AI-generated reports are the central concern in this application category. A Harvard Medical School analysis found that common evaluation metrics frequently failed to catch serious clinical mistakes in AI-generated radiology reports — automated scoring systems designed to assess how “good” an AI report looks failed to reliably identify clinically significant errors. Newer evaluation metrics — RadGraph F1 and RadCliQ — are being developed to align with human clinical judgment, ensuring AI report output is measured for diagnostic accuracy rather than linguistic plausibility.
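A small example shows why word-overlap scoring can miss clinically significant errors. The sketch below computes a plain unigram F1 score, used here only as a stand-in for lexical overlap metrics in general; it is not one of the metrics from the Harvard analysis, and it is not RadGraph F1 or RadCliQ. The two report snippets are invented.

```python
from collections import Counter


def unigram_f1(reference: str, candidate: str) -> float:
    """F1 over whitespace-separated tokens shared between reference and candidate."""
    ref = Counter(reference.lower().split())
    cand = Counter(candidate.lower().split())
    overlap = sum((ref & cand).values())  # multiset intersection
    if overlap == 0:
        return 0.0
    precision = overlap / sum(cand.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


reference = "No pneumothorax. Small left pleural effusion."
candidate = "Large pneumothorax. Small left pleural effusion."

score = unigram_f1(reference, candidate)
print(f"unigram F1 = {score:.2f}")  # five of six tokens match despite the opposite finding
```

The candidate report asserts a finding the reference explicitly rules out, yet five of its six tokens match. A metric aligned with clinical judgment has to score the asserted findings rather than the shared words.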

The research evidence for RAG-enhanced reporting is encouraging. A study published in RadioGraphics evaluated GPT-4 augmented with trauma radiology knowledge from the Top Ten Reading List and achieved 100% correct diagnoses, 96% classification accuracy, and 87% grading accuracy. Plain GPT-4 without retrieval support lagged significantly. Clinician feedback consistently rated the retrieval-augmented model at a median trust score of 5.0 for the explanations and cited sources it produced. A similar system developed for gastrointestinal radiology — the Gastrointestinal Imaging Chatbot — achieved 78% accuracy in differential diagnosis with retrieval augmentation, compared to 54% for generic GPT-4 without RAG.

The architecture pattern for trustworthy AI-generated reporting — domain-specific knowledge bases, retrieval-augmented generation, explainability through source citation, validated evaluation metrics, privacy-safe deployment in institutional environments, and human-in-the-loop oversight, with the radiologist reviewing and signing every AI-drafted report — is covered in depth in the Medicai structured radiology reporting guide.

5. Workflow Automation — AI in the Operational Scaffolding

Workflow automation AI handles the operational and administrative functions surrounding the diagnostic reading workflow — study routing, hanging protocol selection, prior-study matching, quality assurance, and billing code suggestions. Unlike CAD, triage, segmentation, and reporting AI — which act on the image or the report content — workflow automation acts on the metadata and the operational scaffolding around the reading task.

Clinical examples span the operational workflow. Automated hanging protocol selection uses AI analysis of study type and clinical context, replacing reliance on DICOM metadata tags that are inconsistently populated across institutions and modalities (covered in the Medicai hanging protocols guide). Automated prior study retrieval uses AI patient matching, which handles name variations, MRN format changes, and merged patient records better than exact-match algorithms. AI-assisted charge capture proposes CPT codes based on the structured content of the completed report. Quality assurance AI flags reports with internal inconsistencies: measurements that disagree with the impression, classifications that do not match the imaging findings, or required structured fields left incomplete.
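The difference between exact matching and tolerant matching for prior study retrieval can be sketched with the Python standard library. This is an illustrative rule-based matcher, not an AI model and not any vendor's algorithm: the record layout, the normalization rules, and the `name_threshold` value are assumptions made for the example.

```python
import re
from difflib import SequenceMatcher


def normalize_mrn(mrn: str) -> str:
    """Strip prefixes, punctuation, and leading zeros: 'MRN-00123456' -> '123456'."""
    return re.sub(r"\D", "", mrn).lstrip("0")


def normalize_name(name: str) -> str:
    """Split on '^', commas, and spaces, lower-case, and sort tokens so order does not matter."""
    tokens = re.split(r"[\^,\s]+", name.lower().strip())
    return " ".join(sorted(t for t in tokens if t))


def is_same_patient(a: dict, b: dict, name_threshold: float = 0.85) -> bool:
    """Require the same date of birth, then accept a normalized MRN match or a close name match."""
    if a["dob"] != b["dob"]:
        return False
    mrn_a, mrn_b = normalize_mrn(a["mrn"]), normalize_mrn(b["mrn"])
    if mrn_a and mrn_a == mrn_b:
        return True
    similarity = SequenceMatcher(None, normalize_name(a["name"]), normalize_name(b["name"])).ratio()
    return similarity >= name_threshold


current = {"name": "SMITH^JOHN", "mrn": "MRN-00123456", "dob": "1961-04-02"}
prior_reformatted_mrn = {"name": "Smith, Jon", "mrn": "123456", "dob": "1961-04-02"}
prior_new_mrn = {"name": "John Smith", "mrn": "A-998877", "dob": "1961-04-02"}
different_patient = {"name": "SMITH^JOHN", "mrn": "555", "dob": "1975-11-30"}

print(is_same_patient(current, prior_reformatted_mrn))  # MRN matches after normalization
print(is_same_patient(current, prior_new_mrn))          # name matches after normalization
print(is_same_patient(current, different_patient))      # date of birth differs
```

An exact-match lookup on the raw MRN or name string would miss both priors above. In production, a name-plus-birth-date match is usually treated as a candidate for review rather than an automatic merge.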

Medicai’s cloud PACS, with configurable hanging protocols, AI-assisted structured reporting, and integrated workflow automation, addresses this category directly — the viewer and reporter integration described in the structured reporting guide is the workflow automation layer this section describes.

What AI Can Do in Radiology: Measurable Benchmarks from Clinical Evidence

Vendor marketing claims about AI radiology performance are abundant. Peer-reviewed clinical validation data are more limited but more useful. The following benchmarks come from published clinical studies and FDA-cleared product validation data — the figures a radiology practice administrator can reference when evaluating AI tools.

Chest X-ray analysis. Lunit INSIGHT CXR demonstrated 97.9% sensitivity and 95.1% specificity for detecting critical chest X-ray findings in a multi-center validation study published in Lancet Digital Health. The product is FDA-cleared for clinical use across pneumothorax, pneumonia, pulmonary nodules, pleural effusion, and other critical findings on chest radiography.
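When evaluating figures like these for a specific practice, it helps to translate sensitivity and specificity into the share of AI flags that will be true positives, which depends on how common the finding is locally. The sketch below applies the standard positive predictive value formula to the published figures; the 5% and 20% prevalence values are assumptions chosen for illustration, not numbers from the study.

```python
def positive_predictive_value(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV = true positives / (true positives + false positives), per unit of population."""
    true_pos = sensitivity * prevalence
    false_pos = (1.0 - specificity) * (1.0 - prevalence)
    return true_pos / (true_pos + false_pos)


SENSITIVITY = 0.979  # published figure quoted above
SPECIFICITY = 0.951  # published figure quoted above

# Hypothetical prevalence values for two different reading settings.
ppv_low = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence=0.05)
ppv_high = positive_predictive_value(SENSITIVITY, SPECIFICITY, prevalence=0.20)

print(f"PPV at 5% prevalence:  {ppv_low:.1%}")   # roughly half of flags are true positives
print(f"PPV at 20% prevalence: {ppv_high:.1%}")
```

The same model produces a very different ratio of true to false flags in a low-prevalence screening population than in a high-prevalence emergency setting, which is why local case mix belongs in any evaluation.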

Mammography. iCAD ProFound AI demonstrated a 9.6% increase in cancer detection rate, accompanied by a 5.6% reduction in recall rate, in a clinical study published in Radiology. The performance was measured against a control group of radiologists reading without AI assistance — meaning the gain came from radiologist and AI collaborative reading, not from AI alone.

Stroke triage. Viz.ai reduced door-to-treatment time for large vessel occlusion stroke by an average of 26 minutes in multi-center deployment studies, a clinically significant improvement given the established relationship between time to treatment and neurological outcome in stroke. The product is FDA-cleared and CMS-reimbursed under the New Technology Add-on Payment program for stroke imaging.

Cardiac MRI segmentation. AI-assisted ventricular volume calculation shows correlations of r = 0.95 to 0.98 with expert manual segmentation across multiple validation studies, reducing analysis time from 20–30 minutes of manual contouring to under 30 seconds per study while preserving measurement accuracy within the inter-reader variability range of expert manual segmentation.

Burnout and workflow. A 2024 JAMA Network Open study — “Artificial Intelligence and Radiologist Burnout” — found statistically significant reductions in radiologist burnout scores associated with AI-assisted workflow tool deployment. The mechanism was cognitive load reduction through automation of non-interpretive tasks rather than throughput increase — covered in detail in the Medicai radiologist burnout guide.

Limitations and Regulatory Status

The capability benchmarks above are real and clinically validated. The limitations that follow are equally real and frequently understated in vendor marketing.

Current Limitations

Dataset bias and generalisability. AI models trained on data from a single institution, geographic region, or patient population may underperform when deployed in a different demographic, geographic, or clinical context. A chest X-ray model trained on a US academic medical center population may underperform on imaging from a Southeast Asian or African population where disease prevalence patterns, body habitus distributions, and acquisition protocols differ. The generalisability question must be answered for the specific deployment context, not assumed from the original validation study.

Edge case performance. AI performs best on pathology that is common and well represented in the training data. Rare findings, atypical presentations, and incidental findings that fall outside the model’s training distribution are where AI is most likely to miss, and where radiologist expertise remains irreplaceable. The radiologist’s value is highest in cases where AI is most likely to misclassify, which is the inverse of where most workload pressure lies.

Integration friction in deployment. An AI tool that performs well in a research validation study may underperform in clinical deployment because its integration with the PACS viewer is poor, inference time adds latency to the reading workflow, or its output format does not match the radiologist’s reporting structure. The performance gap between published validation and clinical reality is consistently underestimated in procurement decisions.

Hallucination risk in generative AI. LLMs used for report generation can produce plausible-sounding but clinically incorrect statements — generated findings, fabricated measurements, or impressions that do not match the underlying findings. The RAG methodology described in the reporting assistance section addresses this specifically by grounding generation in retrieved evidence, but hallucination risk remains non-zero even with retrieval augmentation. Every AI-generated report requires radiologist review and approval — the human-in-the-loop is not optional for generative AI in clinical contexts.
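One narrow class of hallucination, a measurement in the draft that never appeared in the findings, can be caught with a simple consistency check. The sketch below is an assumption-laden illustration rather than a description of any product: it only compares numeric measurements, and the sample findings and draft text are invented.

```python
import re
from typing import Set

MEASUREMENT = re.compile(r"(\d+(?:\.\d+)?)\s*(mm|cm)\b", re.IGNORECASE)


def measurements_mm(text: str) -> Set[float]:
    """Collect every measurement in the text, normalized to millimeters."""
    found = set()
    for value, unit in MEASUREMENT.findall(text):
        mm = float(value) * (10 if unit.lower() == "cm" else 1)
        found.add(round(mm, 1))
    return found


def unsupported_measurements(findings: str, draft_impression: str) -> Set[float]:
    """Measurements that appear in the AI draft but nowhere in the radiologist's findings."""
    return measurements_mm(draft_impression) - measurements_mm(findings)


findings = "8 mm solid nodule in the right upper lobe. 3 mm nodule in the left lower lobe."
draft = "Right upper lobe nodule measuring 18 mm; 3 mm left lower lobe nodule."

flagged = unsupported_measurements(findings, draft)
print(flagged)  # the 18 mm value has no support in the findings
```

A check like this catches only one error pattern. It says nothing about wrong laterality, invented findings, or an impression that contradicts the findings in words, so it supplements radiologist review and does not replace it.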

Regulatory Status

The FDA has cleared over 1,000 AI/ML-enabled medical devices as of 2024, with radiology representing the largest single category. Most cleared devices are CAD or triage tools operating under 510(k) clearance — the predicate device equivalence pathway — which means the device has demonstrated substantial equivalence to a previously cleared device rather than undergoing the full pre-market approval process required for novel high-risk devices.

The FDA’s proposed regulatory framework for AI/ML-based Software as a Medical Device — the Predetermined Change Control Plan — is designed to allow iterative model updates without requiring new clearance for each version, addressing the structural mismatch between traditional medical device regulation (which assumes a fixed device) and AI models that improve through retraining. The framework is in active development but not yet fully implemented across all device categories.

In the European Union, AI medical devices fall under the Medical Device Regulation (MDR) 2017/745, with most radiology AI tools classified as Class IIa or Class IIb devices requiring conformity assessment by a Notified Body. The MDR’s requirements for clinical evaluation, post-market surveillance, and risk classification are more stringent than those of the FDA 510(k) pathway, leading some AI vendors to launch products in the US market first and pursue EU CE marking subsequently.

AI in Radiology Compared: Five Application Types at a Glance

The table below summarises the five application categories across the four dimensions that matter for clinical evaluation: what the AI does, where it sits in the workflow, which named products lead the category, and how mature it is in clinical deployment.

| AI application type | What it does | Workflow position | Named products | Clinical maturity and validation |
|---|---|---|---|---|
| Computer-aided detection (CAD) | Identifies and marks potential findings (pulmonary nodules, fractures, intracranial hemorrhages, mammographic masses) for radiologist review and verification | During image review: AI overlays appear on the PACS viewer alongside the original DICOM images, flagging regions of interest for the radiologist | Lunit INSIGHT CXR (chest X-ray), iCAD ProFound AI (mammography), Aidoc (multi-pathology) | Most mature category: radiology is the largest category among the 1,000+ FDA-cleared AI/ML devices, and chest X-ray AI demonstrated 97.9% sensitivity and 95.1% specificity in a Lancet Digital Health validation study |
| Triage and prioritization | Analyzes incoming studies at acquisition and reorders the radiologist’s reading worklist, moving studies with suspected critical findings to the top of the queue | Before radiologist review: processes studies within 60 seconds of acquisition and reprioritizes the worklist before the radiologist opens any study | Viz.ai (large vessel occlusion stroke), Aidoc (multi-pathology triage), RapidAI (stroke and pulmonary embolism) | Mature: FDA-cleared and clinically deployed across stroke, PE, pneumothorax, and intracranial hemorrhage; Viz.ai reduced door-to-treatment time for stroke by 26 minutes in multi-center studies |
| Image segmentation | Automatically delineates anatomical structures or pathological regions (organ boundaries, tumor volumes, vessel anatomy, ventricular chambers) to support quantitative measurement | During or after image review: produces quantitative measurements supporting diagnosis, treatment planning, and longitudinal comparison | Tempus Arterys (cardiac MRI), CorticoMetrics (brain volumetrics), Therapixel (radiation therapy contouring) | Established: cardiac MRI AI segmentation shows r = 0.95 to 0.98 correlation with expert manual contouring; reduces analysis time from 20–30 minutes to under 30 seconds per study |
| Reporting assistance | Supports report generation: radiology-optimized speech recognition, NLP-based structured template population, AI-drafted impressions, and RAG-enhanced report generation with cited sources | After image review: processes the radiologist’s dictation or findings to produce the structured report delivered through HL7 ORU to the EHR | Nuance PowerScribe (dictation), Rad AI Impressions (AI-drafted impressions), RAG-enhanced systems (research stage) | Mixed maturity: AI dictation is fully mature and widely deployed; AI-drafted impressions are in commercial rollout; RAG-enhanced report generation achieved 100% correct diagnoses in a RadioGraphics study but remains pre-commercial |
| Workflow automation | Automates operational tasks surrounding the reading workflow: hanging protocol selection, prior study matching, quality assurance, CPT code suggestion for charge capture | Throughout the workflow: operates in the background from study acquisition through report distribution, without direct radiologist interaction | Native cloud PACS features (Medicai and other cloud-native platforms); fewer standalone third-party products than other AI categories | Emerging: integrated into modern cloud PACS platforms as built-in capability rather than separately procured AI; JAMA Network Open 2024 study found workflow AI tools were associated with measurable radiologist burnout reduction |

Pros and Cons of AI in Radiology

The strongest case for AI in radiology rests on three specific, evidence-based benefits. The first is faster turnaround for critical findings through triage AI: the Viz.ai 26-minute reduction in door-to-treatment time for stroke translates directly to better neurological outcomes. The second is improved detection accuracy in screening contexts: iCAD ProFound AI’s 9.6% increase in cancer detection rate translates to earlier-stage diagnoses with a materially better prognosis. The third is reduced radiologist cognitive load on non-interpretive tasks: the workflow automation and reporting assistance categories address the administrative burden that the Medscape 2023 data identifies as the primary contributor to radiologist burnout.

The strongest case against premature AI deployment rests on equally specific concerns. Generalisability gaps between validation populations and deployment populations produce performance variability that vendor marketing rarely discloses. Integration friction at the PACS, RIS, and EHR interfaces disrupts deployment-stage workflows, eroding the theoretical efficiency gain. Hallucination risk in generative reporting AI requires that every output be reviewed by the radiologist, meaning the radiologist’s role does not disappear — it shifts toward verification and quality control of AI-generated content. And the financial case for many AI tools remains underdocumented at the per-practice level, with vendor ROI claims often based on case studies that may not reflect average deployment outcomes.

The Future of AI in Radiology

Three directions of development are visible in current research and product roadmaps. First, the consolidation of multi-pathology AI: rather than separate single-finding detection tools, integrated platforms that analyze a study for multiple potential pathologies in a single inference pass — reducing both the integration burden and the alert fatigue that comes from multiple separate AI tools each generating their own notifications. Second, the maturation of generative reporting AI: RAG-enhanced report generation moving from research validation into clinical pilot programs, with the trust framework — domain-specific knowledge bases, source citation, validated evaluation metrics, human-in-the-loop oversight — becoming the standard architecture rather than the research frontier. Third, the integration of AI as a native PACS capability: cloud-native PACS platforms incorporating AI tools as built-in features rather than third-party integrations, eliminating the deployment friction that has constrained AI adoption in smaller radiology practices and imaging centers.

For radiology practices evaluating where to invest in AI today, the practical sequence is: deploy triage AI first for the categories where time-to-finding has direct clinical outcome impact (stroke, intracranial haemorrhage, PE), deploy CAD where validated for the practice’s modality and case mix, deploy reporting assistance with explainability and human oversight built in, and treat workflow automation as a PACS infrastructure decision rather than a separate AI procurement.

Frequently Asked Questions

How does AI fit into the radiology workflow?

AI fits into the radiology workflow at five stages: it triages the worklist before reading, flags findings during image review (CAD), segments anatomy for quantitative measurement, drafts the diagnostic report, and automates operational tasks like hanging protocol selection and prior-study matching across the workflow.

What is AI radiology workflow optimization?

AI radiology workflow optimization is the integration of machine learning tools across the radiologist’s reading workflow to reduce non-interpretive task time, prioritize critical findings for faster turnaround, and shift cognitive load away from administrative tasks toward diagnostic interpretation — without replacing the radiologist’s clinical judgment.

How does AI integrate with PACS in the radiology workflow?

AI integrates with PACS in three workflow patterns: triage AI reorders the worklist before the radiologist opens any study, CAD AI overlays findings on the PACS viewer during image review, and reporting AI receives the radiologist’s findings to draft the structured report — all within the same PACS interface.

Is AI currently being used in radiology?

Yes — extensively. As of 2024, the FDA has cleared over 1,000 AI/ML-enabled medical devices, with radiology representing the largest single category. AI tools are deployed across multiple application types: computer-aided detection on chest X-ray, CT, and mammography; triage AI for stroke, pulmonary embolism, and intracranial hemorrhage detection; automated segmentation for cardiac MRI and radiation therapy planning; AI-assisted dictation and structured reporting; and workflow automation integrated into modern cloud PACS platforms. Adoption is highest in large academic medical centers and growing in community radiology practices and imaging centers as cloud-native PACS infrastructure simplifies deployment.

Will AI replace radiologists?

The clinical and research consensus is that AI augments rather than replaces radiologists. AI excels at high-volume, narrow-task pattern recognition on common pathology — exactly the tasks where cognitive fatigue contributes most to radiologist error rates. AI underperforms on rare findings, atypical presentations, complex multi-finding studies requiring integration of clinical context, and on communication and clinical decision-support functions that define the radiologist’s role beyond pure interpretation. The Neiman Health Policy Institute projects a US radiologist shortage of 42,000 to 95,000 FTE by 2055 — AI is not closing this gap, and the demand for radiologist judgment, supervision of AI outputs, and complex case interpretation is increasing rather than decreasing.

What are the pros and cons of AI in radiology?

The primary benefits are faster turnaround for critical findings through triage AI, improved detection accuracy in screening contexts (with mammography AI showing roughly a 10% increase in cancer detection rate in validation studies), and reduced radiologist cognitive load on non-interpretive workflow tasks. The primary concerns are generalisability gaps between validation populations and real-world deployment populations, integration friction at the PACS and EHR interfaces that erodes theoretical efficiency gains, hallucination risk in generative reporting AI that requires continuous radiologist verification, and underdocumented financial ROI at the per-practice level. The balanced assessment is that AI delivers measurable clinical value in specific application categories when properly deployed and overseen, but produces deployment disappointment when adopted broadly without attention to integration, training data fit, and workflow design.

How many FDA-cleared AI radiology devices exist?

As of 2024, the FDA has cleared over 1,000 AI/ML-enabled medical devices across all clinical specialties, with radiology representing the largest single category. The FDA maintains a public database of cleared devices that is regularly updated. It is searchable by device category, manufacturer, and clearance date, and provides the regulatory clearance status, intended use statement, and predicate device for each cleared product.

How does AI integrate with PACS?

AI tools integrate with PACS through several technical interfaces. DICOM-based integration is the most common — the AI receives DICOM studies via the PACS DICOM router, processes them, and returns results either as additional DICOM objects (structured reports, secondary capture images, segmentation overlays) or via a separate API to the radiologist’s worklist. DICOMweb interfaces — WADO-RS, QIDO-RS, STOW-RS — enable cloud-native AI tools to access PACS data through standard HTTP-based APIs, simplifying integration with cloud-hosted AI services. HL7 ORU integration delivers AI-generated structured reports to the RIS and EHR through the same messaging interfaces used for radiologist reports. Modern cloud-native PACS platforms — including Medicai — support all three integration patterns natively, reducing the integration engineering required to deploy AI tools alongside the imaging archive.
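As an illustration of the DICOMweb pattern, the sketch below builds a QIDO-RS study search URL of the kind a cloud-hosted AI service might send to find studies to process. The base URL and patient ID are placeholders, and no request is sent; the query keys (`PatientID`, `ModalitiesInStudy`, `StudyDate`, `limit`) follow the DICOMweb standard, but any given PACS may support only a subset.

```python
from urllib.parse import urlencode


def qido_studies_url(base_url: str, patient_id: str, modality: str,
                     study_date: str, limit: int = 25) -> str:
    """Build a QIDO-RS 'search for studies' URL (GET {base}/studies?...)."""
    params = {
        "PatientID": patient_id,
        "ModalitiesInStudy": modality,
        "StudyDate": study_date,  # DICOM date or date range, e.g. "20240101-20240131"
        "limit": limit,           # cap on the number of results returned
    }
    return f"{base_url.rstrip('/')}/studies?{urlencode(params)}"


# Placeholder endpoint and identifiers, for illustration only.
url = qido_studies_url("https://pacs.example.org/dicomweb", "123456", "CT", "20240101-20240131")
print(url)

# A client would send this as an HTTP GET with the header
#   Accept: application/dicom+json
# and receive a JSON array of matching studies, then fetch images through WADO-RS.
```

Because the interface is plain HTTP, the same request works from any language or cloud environment without a DICOM network association, which is the integration advantage described above.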
